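1. Traefik

Traefik is an HTTP-layer router. Website: http://traefik.cn/. Documentation: https://docs.traefik.io/user-guide/kubernetes/

It serves the same purpose as nginx ingress.

Unlike nginx ingress, Traefik talks to the Kubernetes API in real time, detects changes to backend Services and Pods, and updates its configuration and hot-reloads automatically. That makes Traefik quicker and more convenient to operate, and it supports more features, which makes reverse proxying and load balancing more direct and efficient.

This section deploys Traefik on the k8s cluster built in the previous article.

Create a certificate for k8s-master-lb: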

[root@k8s-master01 ~]# openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout tls.key -out tls.crt -subj "/CN=k8s-master-lb"

Generating a 2048 bit RSA private key

................................................................................................................+++

.........................................................................................................................................................+++

writing new private key to 'tls.key'

Store the certificate in a k8s secret:

[root@k8s-master01 ~]# kubectl -n kube-system create secret generic traefik-cert --from-file=tls.key --from-file=tls.crt

secret/traefik-cert created

Install Traefik:

[root@k8s-master01 kubeadm-ha]# kubectl apply -f traefik/

serviceaccount/traefik-ingress-controller created

clusterrole.rbac.authorization.k8s.io/traefik-ingress-controller created

clusterrolebinding.rbac.authorization.k8s.io/traefik-ingress-controller created

configmap/traefik-conf created

daemonset.extensions/traefik-ingress-controller created

service/traefik-web-ui created

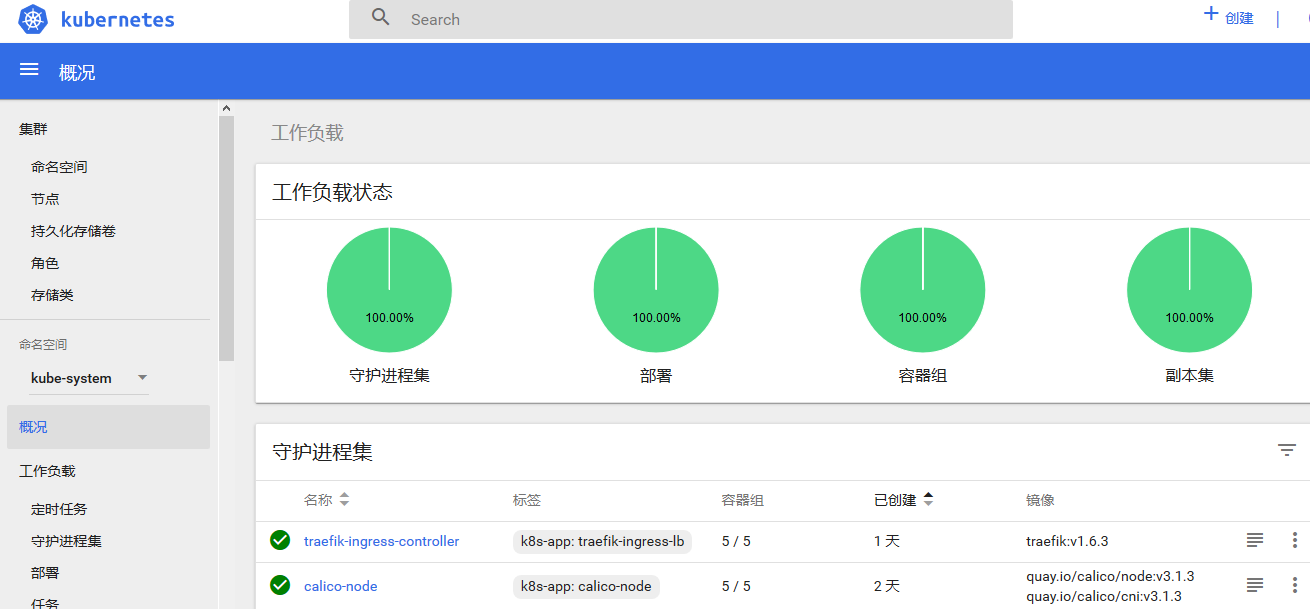

ingress.extensions/traefik-jenkins created查看pods,因为创建的类型是DaemonSet所有每个节点都会创建一个Traefix的pod

[root@k8s-master01 kubeadm-ha]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-node-kwz9t 2/2 Running 0 20h

kube-system calico-node-nfhrd 2/2 Running 0 56m

kube-system calico-node-nxtlf 2/2 Running 0 57m

kube-system calico-node-rj8p8 2/2 Running 0 20h

kube-system calico-node-xfsg5 2/2 Running 0 20h

kube-system coredns-777d78ff6f-4rcsb 1/1 Running 0 22h

kube-system coredns-777d78ff6f-7xqzx 1/1 Running 0 22h

kube-system etcd-k8s-master01 1/1 Running 0 16h

kube-system etcd-k8s-master02 1/1 Running 0 21h

kube-system etcd-k8s-master03 1/1 Running 9 20h

kube-system heapster-5874d498f5-ngk26 1/1 Running 0 16h

kube-system kube-apiserver-k8s-master01 1/1 Running 0 16h

kube-system kube-apiserver-k8s-master02 1/1 Running 0 20h

kube-system kube-apiserver-k8s-master03 1/1 Running 1 20h

kube-system kube-controller-manager-k8s-master01 1/1 Running 0 16h

kube-system kube-controller-manager-k8s-master02 1/1 Running 1 20h

kube-system kube-controller-manager-k8s-master03 1/1 Running 0 20h

kube-system kube-proxy-4cjhm 1/1 Running 0 22h

kube-system kube-proxy-kpxhz 1/1 Running 0 56m

kube-system kube-proxy-lkvjk 1/1 Running 2 21h

kube-system kube-proxy-m7htq 1/1 Running 0 22h

kube-system kube-proxy-r4sjs 1/1 Running 0 57m

kube-system kube-scheduler-k8s-master01 1/1 Running 2 16h

kube-system kube-scheduler-k8s-master02 1/1 Running 0 21h

kube-system kube-scheduler-k8s-master03 1/1 Running 2 20h

kube-system kubernetes-dashboard-7954d796d8-2k4hx 1/1 Running 0 17h

kube-system metrics-server-55fcc5b88-bpmkm 1/1 Running 0 16h

kube-system monitoring-grafana-9b6b75b49-4zm6d 1/1 Running 0 18h

kube-system monitoring-influxdb-655cd78874-56gf8 1/1 Running 0 16h

kube-system traefik-ingress-controller-cv2jg 1/1 Running 0 28s

kube-system traefik-ingress-controller-d7lzw 1/1 Running 0 28s

kube-system traefik-ingress-controller-r2z29 1/1 Running 0 28s

kube-system traefik-ingress-controller-tm6vv 1/1 Running 0 28s

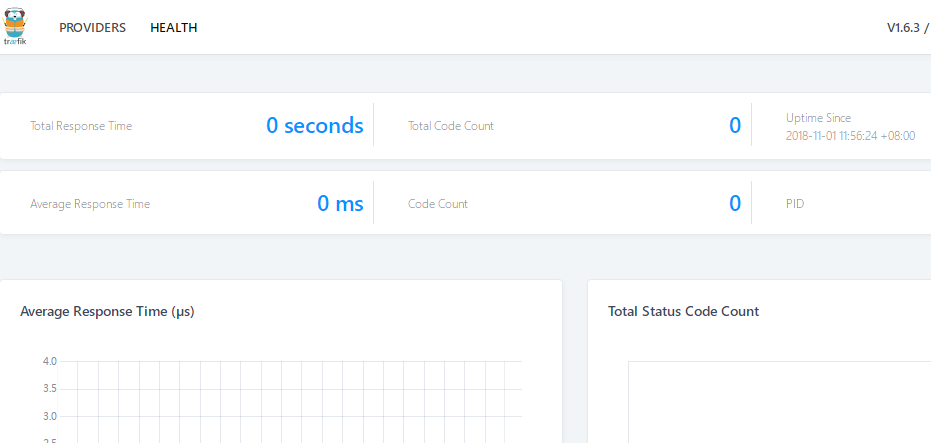

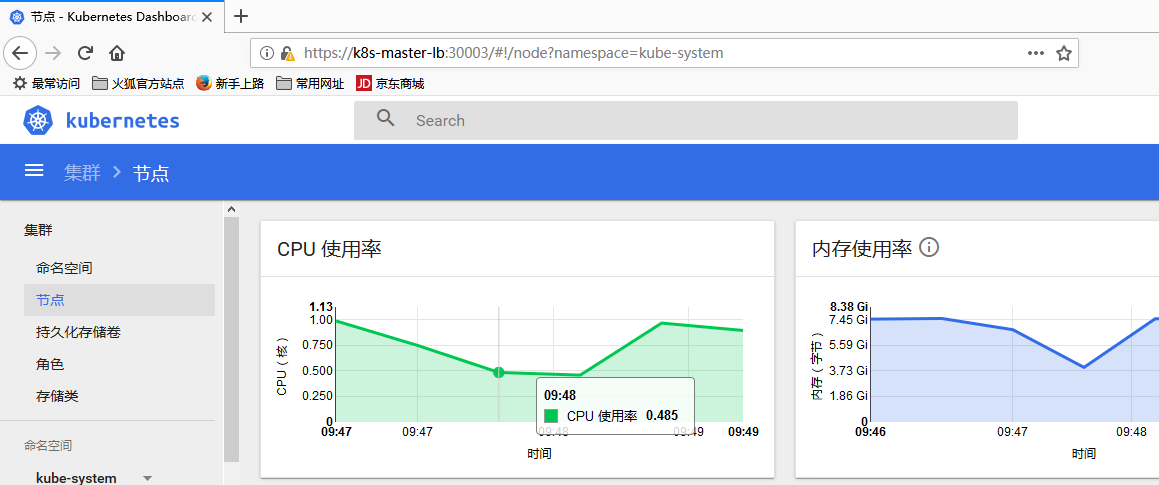

kube-system traefik-ingress-controller-w4mj7 1/1 Running 0 28s打开Traefix的Web UI:http://k8s-master-lb:30011/
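With five nodes in this cluster, the listing above should contain five traefik-ingress-controller pods, all Running. As a rough sketch, that check can be scripted by piping the kubectl output through a small awk filter. The function name traefik_pod_summary is invented for this example, and the column layout (NAMESPACE NAME READY STATUS RESTARTS AGE) is assumed to match the output shown above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get pods --all-namespaces` output on stdin
# and reports how many traefik-ingress-controller pods are Running.
# Assumes the columns are: NAMESPACE NAME READY STATUS RESTARTS AGE.
traefik_pod_summary() {
  awk '$2 ~ /^traefik-ingress-controller-/ {
         total++
         if ($4 == "Running") running++
       }
       END { printf "%d/%d running\n", running, total }'
}

# Against a live cluster (not run here):
#   kubectl get pods --all-namespaces | traefik_pod_summary
```

If the first number is lower than the node count, the pod on the missing node is the place to start looking.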

Create a test web application:

[root@k8s-master01 ~]# cat traefix-test.yaml

apiVersion: v1
kind: Service
metadata:
  name: nginx-svc
spec:
  selector:
    run: ngx-pod
  ports:
  - protocol: TCP
    port: 80
    targetPort: 80
---
apiVersion: apps/v1beta1
kind: Deployment
metadata:
  name: ngx-pod
spec:
  replicas: 4
  template:
    metadata:
      labels:
        run: ngx-pod
    spec:
      containers:
      - name: nginx
        image: nginx:1.10
        ports:
        - containerPort: 80
---
apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: ngx-ing
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: traefix-test.com
    http:
      paths:
      - backend:
          serviceName: nginx-svc
          servicePort: 80

[root@k8s-master01 ~]# kubectl create -f traefix-test.yaml

service/nginx-svc created

deployment.apps/ngx-pod created

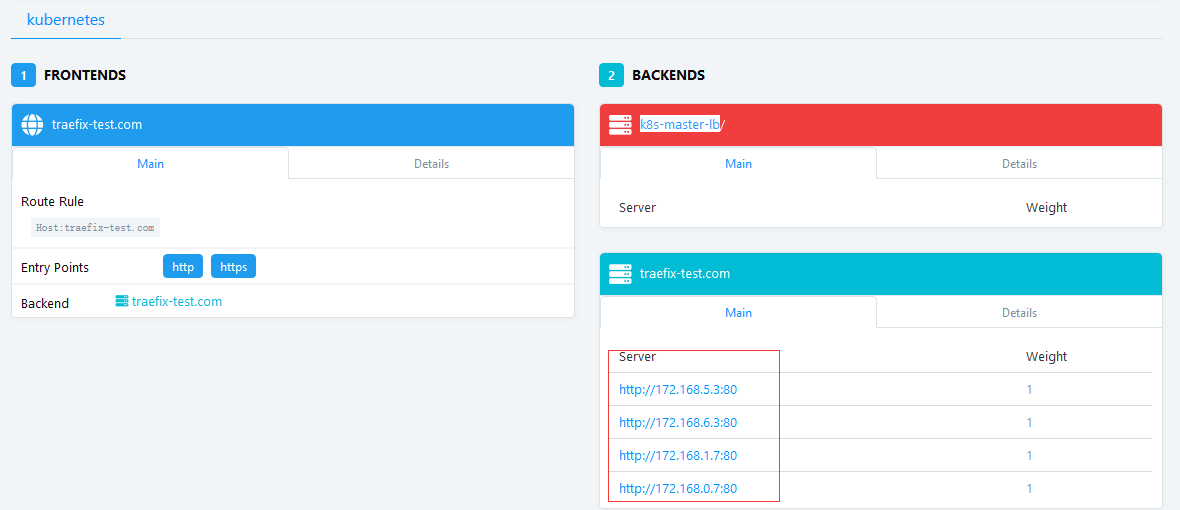

ingress.extensions/ngx-ing createdtraefix UI查看

k8s查看

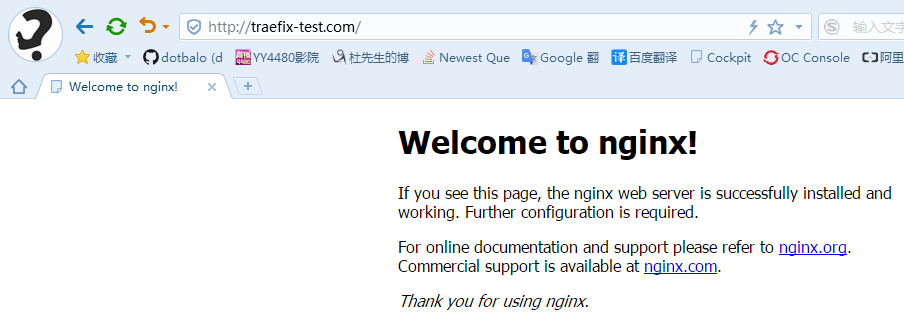

Access test: point the domain traefix-test.com at any node in the cluster (via DNS or a hosts entry), then open http://traefix-test.com/.
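For a quick test without touching DNS, one option is a hosts-file entry on the client machine. The sketch below only prints the line to add. The name hosts_entry is invented here, and 192.0.2.10 is a placeholder for the IP of any cluster node, since the article does not list the node addresses.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print an /etc/hosts line mapping a host name to a node IP.
hosts_entry() {
  local ip="$1" host="$2"
  printf '%s %s\n' "$ip" "$host"
}

# 192.0.2.10 is a placeholder; substitute the IP of any cluster node.
hosts_entry 192.0.2.10 traefix-test.com

# To apply it (needs root, not run here):
#   hosts_entry 192.0.2.10 traefix-test.com >> /etc/hosts
# Alternatively, curl can pin the name to an IP for a single request:
#   curl --resolve traefix-test.com:80:192.0.2.10 http://traefix-test.com/
```

The curl variant leaves the client's hosts file untouched, which is convenient when checking several nodes in turn.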

HTTPS certificate configuration

Reusing the nginx service created above, create a second ingress that serves HTTPS:

[root@k8s-master01 nginx-cert]# cat ../traefix-https.yaml

apiVersion: extensions/v1beta1
kind: Ingress
metadata:
  name: nginx-https-test
  namespace: default
  annotations:
    kubernetes.io/ingress.class: traefik
spec:
  rules:
  - host: traefix-test.com
    http:
      paths:
      - backend:
          serviceName: nginx-svc
          servicePort: 80
  tls:
  - secretName: nginx-test-tls

Create the certificate (in production this would be the certificate purchased by the company):

[root@k8s-master01 nginx-cert]# openssl req -x509 -nodes -days 365 -newkey rsa:2048 -keyout tls.key -out tls.crt -subj "/CN=traefix-test.com"

Generating a 2048 bit RSA private key

.................................+++

.........................................................+++

writing new private key to 'tls.key'

-----

Import the certificate:

kubectl -n default create secret tls nginx-test-tls --key=tls.key --cert=tls.crt

Create the ingress:

[root@k8s-master01 ~]# kubectl create -f traefix-https.yaml

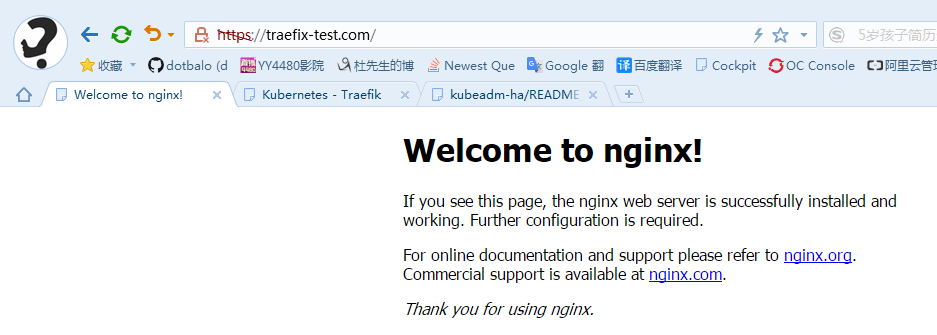

ingress.extensions/nginx-https-test created访问测试:

其他方法查看官方文档:https://docs.traefik.io/user-guide/kubernetes/
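Before importing a key pair as a TLS secret, it can be worth confirming that tls.crt and tls.key actually belong together, since a mismatched pair is an easy mistake when the self-signed files are swapped for a purchased certificate. The following is a minimal sketch for RSA keys such as the ones generated above. It compares the public modulus of both files, and cert_matches_key is a name invented for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeed if an RSA certificate and private key share the same modulus.
cert_matches_key() {
  local crt="$1" key="$2"
  local crt_mod key_mod
  crt_mod=$(openssl x509 -noout -modulus -in "$crt") || return 1
  key_mod=$(openssl rsa -noout -modulus -in "$key" 2>/dev/null) || return 1
  [ "$crt_mod" = "$key_mod" ]
}

# Usage, in the directory holding the files created above (not run here):
#   cert_matches_key tls.crt tls.key && echo "certificate and key match"
```

The comparison only applies to RSA material. Other key types would need a different check.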

2. Installing Prometheus

Install Prometheus:

[root@k8s-master01 kubeadm-ha]# kubectl apply -f prometheus/

clusterrole.rbac.authorization.k8s.io/prometheus created

clusterrolebinding.rbac.authorization.k8s.io/prometheus created

configmap/prometheus-server-conf created

deployment.extensions/prometheus created

service/prometheus created

Check the pod:

[root@k8s-master01 kubeadm-ha]# kubectl get pods --all-namespaces | grep prome

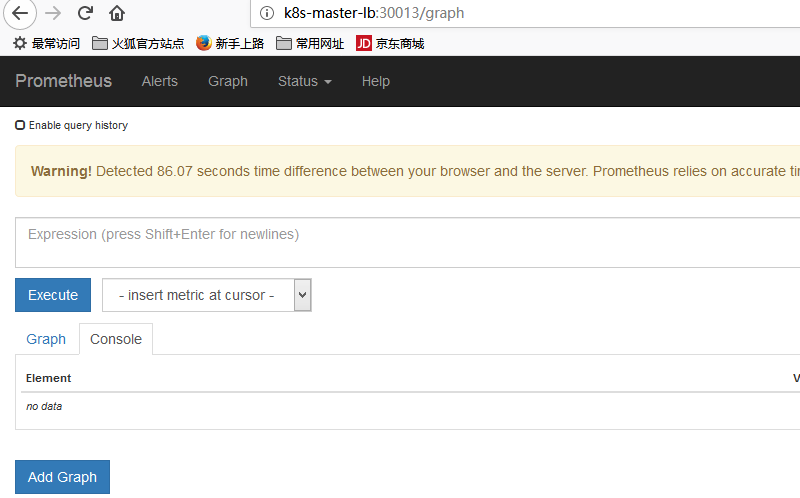

kube-system prometheus-56dff8579d-x2w62 1/1 Running 0 52s访问地址:http://k8s-master-lb:30013/

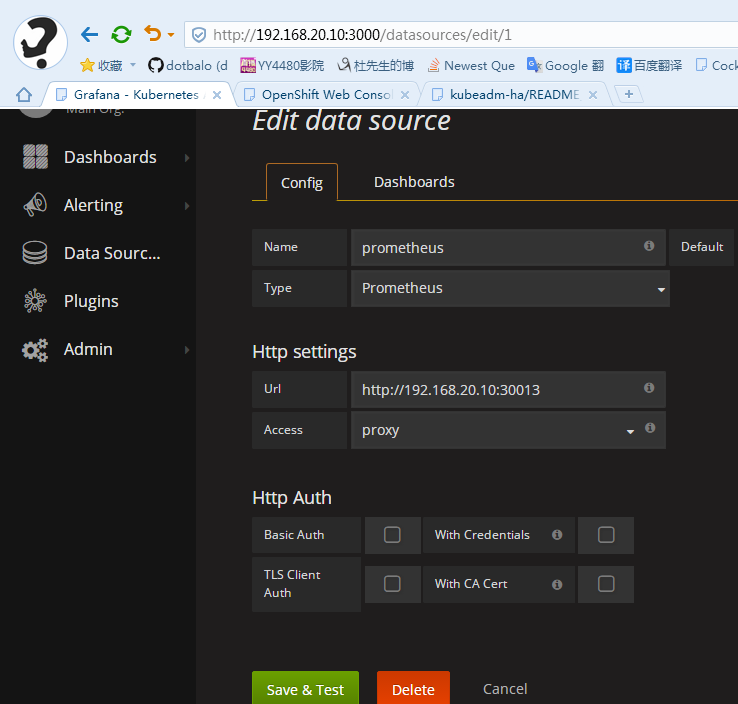

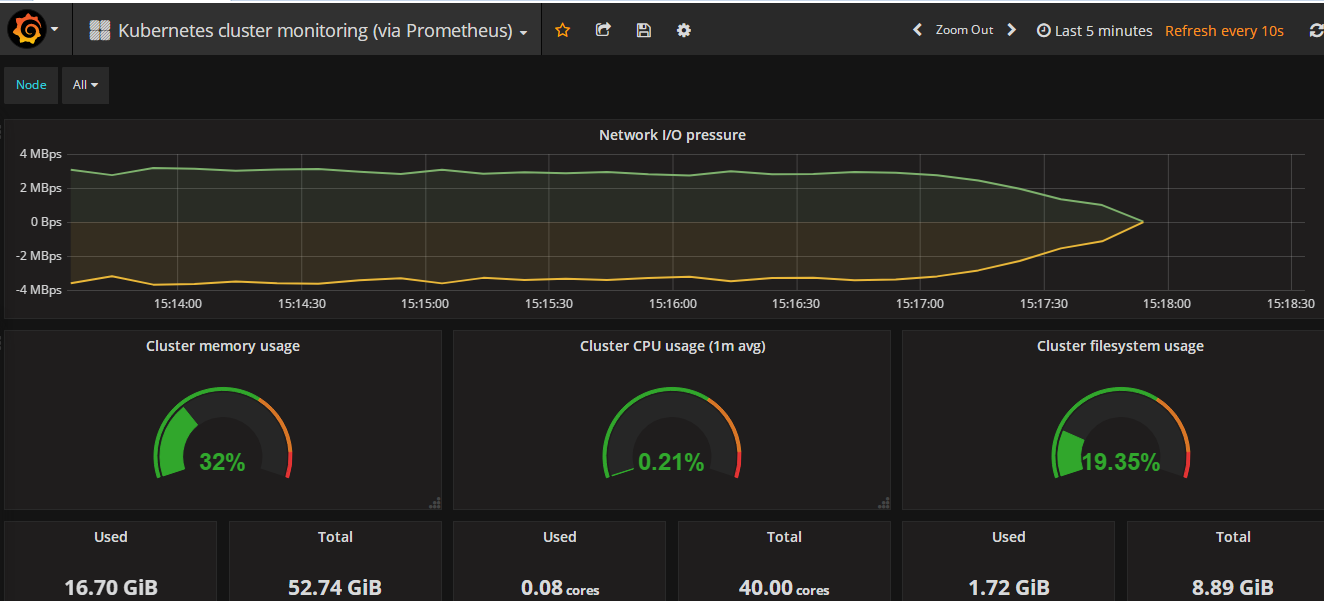

Install and use Grafana (a self-installed Grafana is used here, set up on the host with yum):

yum install https://s3-us-west-2.amazonaws.com/grafana-releases/release/grafana-4.4.3-1.x86_64.rpm -y

Start Grafana:

[root@k8s-master01 grafana-dashboard]# systemctl start grafana-server

[root@k8s-master01 grafana-dashboard]# systemctl enable grafana-server

Created symlink from /etc/systemd/system/multi-user.target.wants/grafana-server.service to /usr/lib/systemd/system/grafana-server.service.

Open http://192.168.20.20:3000 and log in with username and password admin, then add Prometheus as a data source:

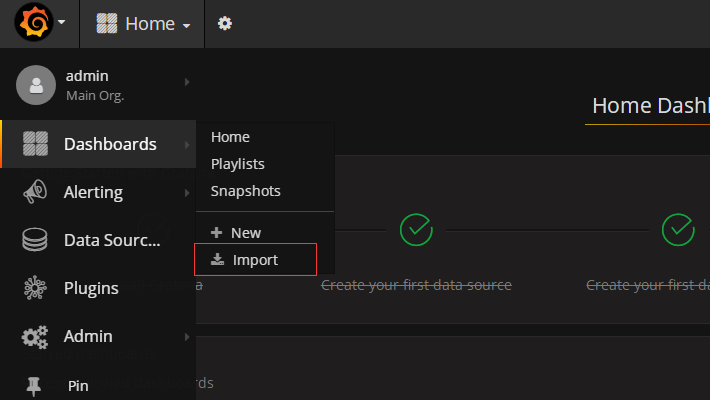

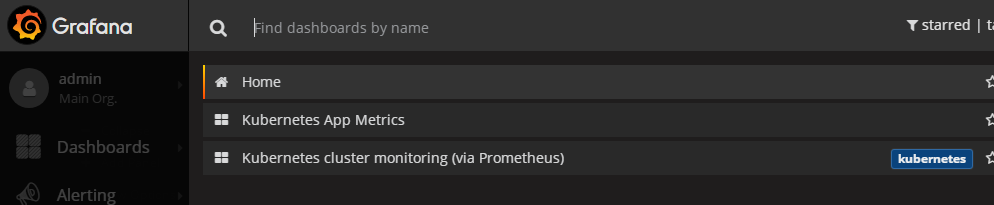
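The data source can also be registered through Grafana's HTTP API instead of the UI. What follows is a sketch under assumptions: it builds a JSON body for a POST to /api/datasources that points at the Prometheus NodePort address used above. The data source name prometheus, the proxy access mode, and the function name are choices made for this example, and the request should be checked against the API documentation for the Grafana version actually installed.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print a JSON body describing a Prometheus data source.
# The name "prometheus" and access mode "proxy" are illustrative choices.
grafana_datasource_payload() {
  local prom_url="$1"
  printf '{"name":"prometheus","type":"prometheus","url":"%s","access":"proxy"}\n' "$prom_url"
}

grafana_datasource_payload http://k8s-master-lb:30013

# Sending it (assumes the admin/admin login mentioned above; not run here):
#   curl -u admin:admin -H 'Content-Type: application/json' \
#        -X POST http://192.168.20.20:3000/api/datasources \
#        -d "$(grafana_datasource_payload http://k8s-master-lb:30013)"
```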

Import the dashboard templates from /root/kubeadm-ha/heapster/grafana-dashboard.

After the import:

View the data:

Grafana documentation: http://docs.grafana.org/

3. Cluster verification

Verify cluster high availability

Create a deployment with 3 replicas:

[root@k8s-master01 ~]# kubectl run nginx --image=nginx --replicas=3 --port=80

deployment.apps/nginx created

[root@k8s-master01 ~]# kubectl get deployment --all-namespaces

NAMESPACE NAME DESIRED CURRENT UP-TO-DATE AVAILABLE AGE

default nginx 3 3 3 3 58s

Check the pods:

[root@k8s-master01 ~]# kubectl get pods -l=run=nginx -o wide

NAME READY STATUS RESTARTS AGE IP NODE

nginx-6f858d4d45-7lv6f 1/1 Running 0 1m 172.168.5.16 k8s-node01

nginx-6f858d4d45-g2njj 1/1 Running 0 1m 172.168.0.18 k8s-master01

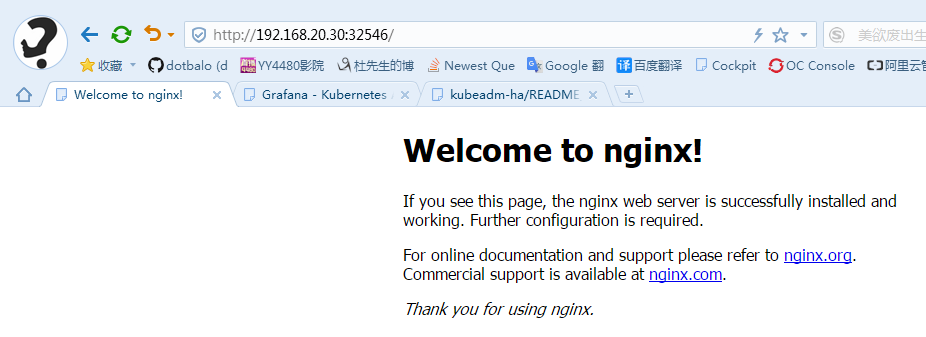

nginx-6f858d4d45-rcz89 1/1 Running 0 1m 172.168.6.12 k8s-node02创建service

[root@k8s-master01 ~]# kubectl expose deployment nginx --type=NodePort --port=80

service/nginx exposed

[root@k8s-master01 ~]# kubectl get service -n default

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 1d

nginx NodePort 10.97.112.176 <none> 80:32546/TCP 29s

Access test:

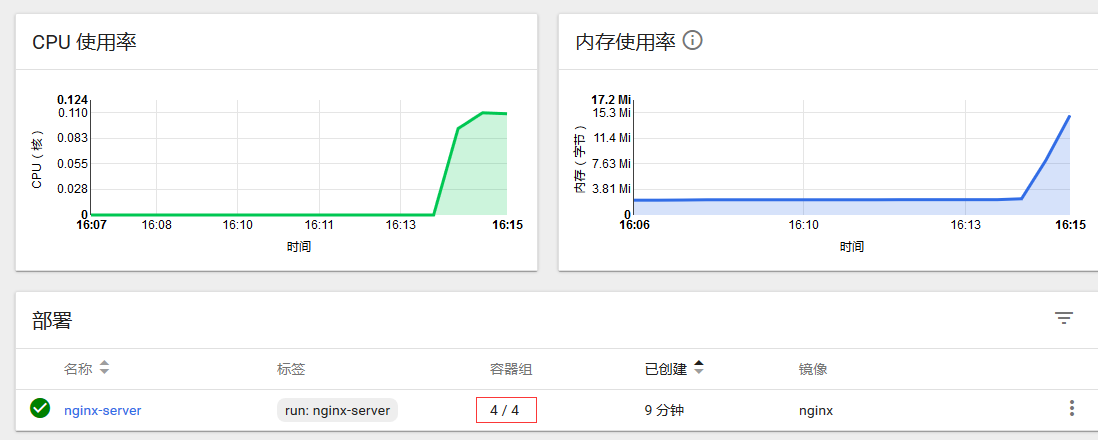

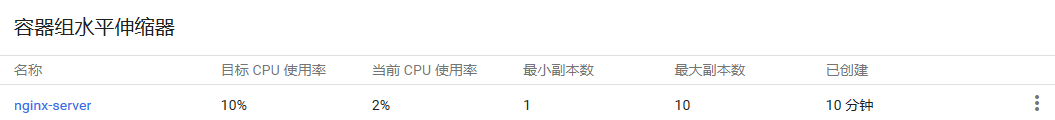
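The port to test against is the second number in the PORT(S) column (32546 in the output above). As a small sketch, it can be pulled out of the kubectl output with awk. The function name node_port_of is invented here, and the column layout is assumed to match the listing above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get service` output on stdin and prints
# the NodePort of the service named in $1.
# Assumes the columns are: NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE.
node_port_of() {
  awk -v svc="$1" '$1 == svc && $2 == "NodePort" {
    split($5, parts, "[:/]")   # "80:32546/TCP" -> 80, 32546, TCP
    print parts[2]
  }'
}

# Against a live cluster (not run here):
#   port=$(kubectl get service -n default | node_port_of nginx)
#   curl "http://k8s-master-lb:${port}/"
```

Because the service type is NodePort, the same port should answer on every node, which is what makes it a convenient high-availability probe.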

Test HPA autoscaling

# Create the test service

kubectl run nginx-server --requests=cpu=10m --image=nginx --port=80

kubectl expose deployment nginx-server --port=80

# Create the HPA

kubectl autoscale deployment nginx-server --cpu-percent=10 --min=1 --max=10

Look up the current ClusterIP of nginx-server:

[root@k8s-master01 ~]# kubectl get service -n default

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 1d

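nginx-server ClusterIP 10.108.160.23 <none> 80/TCP 5m

Generate load against the test service:

[root@k8s-master01 ~]# while true; do wget -q -O- http://10.108.160.23 > /dev/null; done

Check the scale-out progress:

Stop the load generator. Once the load stops, the pods scale back in automatically (scale-in takes roughly 10-15 minutes).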
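For intuition about how far the HPA scales under load: the Kubernetes documentation gives the target replica count as ceil(currentReplicas × currentUtilization / targetUtilization), bounded by --min and --max. A simplified sketch of that arithmetic follows. It ignores the controller's tolerance band and its scale-down delay, and the function name and the sample utilisation reading are invented for illustration.

```shell
#!/usr/bin/env bash
# Simplified model of the HPA scaling rule:
#   desired = ceil(current_replicas * current_util / target_util), clamped to [min, max]
# Utilisation values are integer percentages of the CPU request.
hpa_desired_replicas() {
  local current="$1" util="$2" target="$3" min="$4" max="$5"
  local desired=$(( (current * util + target - 1) / target ))   # integer ceiling
  if (( desired < min )); then desired=$min; fi
  if (( desired > max )); then desired=$max; fi
  echo "$desired"
}

# Settings from the autoscale command above: target 10%, min 1, max 10.
# The 50% reading is a made-up sample value.
hpa_desired_replicas 1 50 10 1 10   # prints 5
```

With a 10m CPU request and a 10% target, even light traffic pushes utilisation well past the threshold, which is why the wget loop above is enough to trigger scaling.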

Delete the test resources:

[root@k8s-master01 ~]# kubectl delete deploy,svc,hpa nginx-server

deployment.extensions "nginx-server" deleted

service "nginx-server" deleted

horizontalpodautoscaler.autoscaling "nginx-server" deleted

4. Cluster stability test

Power off master01.

Check from master02:

[root@k8s-master02 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 NotReady master 1d v1.11.1

k8s-master02 Ready master 1d v1.11.1

k8s-master03 Ready master 1d v1.11.1

k8s-node01 Ready <none> 22h v1.11.1

k8s-node02 Ready <none> 22h v1.11.1访问测试

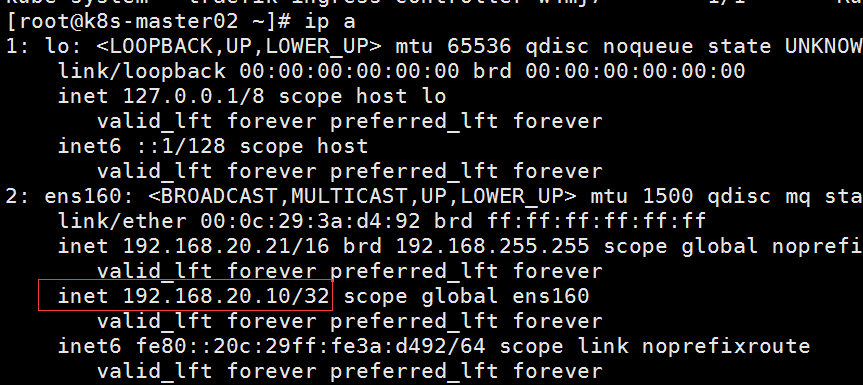

VIP以漂移至master02
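The control plane keeps working without master01 because the remaining members still form a majority. Assuming etcd runs as one member per master (as the etcd-k8s-master01 to etcd-k8s-master03 pods in the earlier listing suggest), the usual majority rule gives the number of failures the cluster can absorb. The helper name is invented for this sketch.

```shell
#!/usr/bin/env bash
# Hypothetical helper: majority quorum and tolerated failures for an etcd cluster
# with the given number of members.
etcd_fault_tolerance() {
  local members="$1"
  local quorum=$(( members / 2 + 1 ))
  echo "quorum=$quorum tolerated_failures=$(( members - quorum ))"
}

etcd_fault_tolerance 3   # prints: quorum=2 tolerated_failures=1
```

With three masters, one can be down at a time. Losing a second one would leave the API server without a writable etcd until a member comes back.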

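Power off every node in the cluster (cut the power directly rather than shutting down cleanly).

Power the nodes back on.

Check the node status: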

[root@k8s-master01 ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master01 Ready master 2d v1.11.1

k8s-master02 Ready master 2d v1.11.1

k8s-master03 Ready master 2d v1.11.1

k8s-node01 Ready <none> 1d v1.11.1

k8s-node02 Ready <none> 1d v1.11.1

Check all pods:

[root@k8s-master01 ~]# kubectl get pods --all-namespaces

NAMESPACE NAME READY STATUS RESTARTS AGE

kube-system calico-node-kwz9t 2/2 Running 2 2d

kube-system calico-node-nfhrd 2/2 Running 2 1d

kube-system calico-node-nxtlf 2/2 Running 2 1d

kube-system calico-node-rj8p8 2/2 Running 2 2d

kube-system calico-node-xfsg5 2/2 Running 2 2d

kube-system coredns-777d78ff6f-dctjh 1/1 Running 1 8h

kube-system coredns-777d78ff6f-ljpqs 1/1 Running 1 8h

kube-system etcd-k8s-master01 1/1 Running 1 6m

kube-system etcd-k8s-master02 1/1 Running 1 21m

kube-system etcd-k8s-master03 1/1 Running 13 2d

kube-system heapster-5874d498f5-rv25x 1/1 Running 1 8h

kube-system kube-apiserver-k8s-master01 1/1 Running 2 6m

kube-system kube-apiserver-k8s-master02 1/1 Running 1 21m

kube-system kube-apiserver-k8s-master03 1/1 Running 8 2d

kube-system kube-controller-manager-k8s-master01 1/1 Running 2 6m

kube-system kube-controller-manager-k8s-master02 1/1 Running 3 21m

kube-system kube-controller-manager-k8s-master03 1/1 Running 2 2d

kube-system kube-proxy-4cjhm 1/1 Running 1 2d

kube-system kube-proxy-kpxhz 1/1 Running 1 1d

kube-system kube-proxy-lkvjk 1/1 Running 3 2d

kube-system kube-proxy-m7htq 1/1 Running 1 2d

kube-system kube-proxy-r4sjs 1/1 Running 1 1d

kube-system kube-scheduler-k8s-master01 1/1 Running 4 6m

kube-system kube-scheduler-k8s-master02 1/1 Running 1 21m

kube-system kube-scheduler-k8s-master03 1/1 Running 5 2d

kube-system kubernetes-dashboard-7954d796d8-2k4hx 1/1 Running 1 1d

kube-system metrics-server-55fcc5b88-bpmkm 1/1 Running 1 1d

kube-system monitoring-influxdb-655cd78874-ccrgl 1/1 Running 1 8h

kube-system prometheus-56dff8579d-28qm5 1/1 Running 1 28m

kube-system traefik-ingress-controller-cv2jg 1/1 Running 1 1d

kube-system traefik-ingress-controller-d7lzw 1/1 Running 1 1d

kube-system traefik-ingress-controller-r2z29 1/1 Running 1 1d

kube-system traefik-ingress-controller-tm6vv 1/1 Running 1 1d

kube-system traefik-ingress-controller-w4mj7 1/1 Running 1 1d

Finally, repeat the access test.
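After a power-loss test like this one, scanning the node list by eye is easy to get wrong. As a rough sketch, the node check can be reduced to a filter that prints only the nodes that are not Ready. The function name not_ready_nodes is invented here, and the input is assumed to have the same columns as the kubectl get nodes output above.

```shell
#!/usr/bin/env bash
# Hypothetical helper: reads `kubectl get nodes` output on stdin and prints
# the name of every node whose STATUS column is not exactly "Ready".
# Assumes the columns are: NAME STATUS ROLES AGE VERSION, with one header line.
not_ready_nodes() {
  awk 'NR > 1 && $2 != "Ready" { print $1 }'
}

# Against a live cluster (not run here):
#   kubectl get nodes | not_ready_nodes
```

Empty output means every node reported Ready. A cordoned node shows a compound status and is listed too, which is usually what one wants after a recovery.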

Source: oschina

Link: https://my.oschina.net/u/4268952/blog/3298300