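Starting with this post, I plan to cover three feature operators in turn: ORB, SIFT, and SURF. In the code part of this post I also introduce the building blocks of feature matching in OpenCV: the KeyPoint type, feature descriptors, and the matcher with its DMatch results.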

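I. Background:

Feature matching operators are widely used in object tracking, image matching, and related tasks. SIFT, SURF, and ORB are among the better-performing ones, and SURF was derived from SIFT as an improvement. For now this series covers ORB, SIFT, and SURF. A brief overview first: ORB detects feature points with FAST and was proposed later than SIFT and SURF. Its main advantage is speed: it runs faster than both (speed comparisons are easy to find online, so none are reproduced here), which is why it is mainly used for real-time image matching. SIFT and SURF are more robust. SURF is an improved variant of SIFT that runs faster, with matching quality that is generally comparable. (SIFT is included so that readers can understand the underlying algorithm, since it gave good results in early feature matching work. And if you are interested, you could try improving SIFT further; who knows, you might come up with some SXXXX and publish a paper or file a patent, haha.)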

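II. How ORB feature matching works:

Feature matching generally takes three steps: (1) detect feature points, (2) compute a descriptor for each feature point, and (3) match feature points by comparing their descriptors.

ORB feature point detection:

1. For feature point detection, ORB uses the FAST algorithm. The principle of FAST is described below (it has some similarity to LBP):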

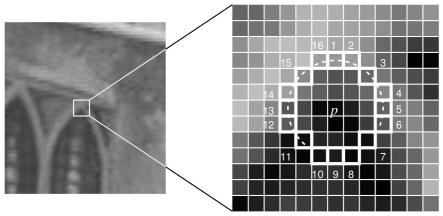

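The basic idea of FAST is to compare the intensity of a candidate pixel with the intensities of the pixels on a circle around it, and to decide from the number of sufficiently different pixels whether the candidate is a corner. The steps are:

(1) Pick a pixel p and let its intensity be I.

(2) Take the m pixels on a circle of radius r around p. By default r = 3 and m = 16, as the figure shows.

(3) Choose a threshold t. If N contiguous pixels among the 16 are all brighter than I + t or all darker than I − t, then p is a corner (N is usually 12 or 9; experiments reported by others suggest that 9 may work better).

These are the basic FAST detection steps. Since an image may be 64×64, 128×128, 1440×1080, and so on, running the full test on every pixel is expensive. A quick rejection test speeds FAST up: examine only the circle pixels at positions 1, 9, 5, and 13 (first 1, then 9, then 5, then 13). If p is a corner with N = 12, at least three of these four must be brighter than I + t or darker than I − t. If that holds, run the full test above; if not, reject p immediately.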
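
The segment test and the quick rejection test can be sketched in plain C++ as follows. This is a minimal sketch, not the OpenCV implementation: the function names `classify`, `passesQuickTest`, and `isFastCorner` are made up for illustration, and the 16 circle intensities are assumed to be already collected into an array in clockwise order, with array index 0 holding circle position 1.

```cpp
#include <array>
#include <cassert>
#include <initializer_list>

// Classify one circle pixel relative to the centre intensity:
// +1 = brighter than center + t, -1 = darker than center - t, 0 = similar.
static int classify(int value, int center, int t) {
    if (value > center + t) return 1;
    if (value < center - t) return -1;
    return 0;
}

// Quick rejection test for N = 12 using circle positions 1, 9, 5, 13
// (array indices 0, 8, 4, 12): at least three of the four must be
// brighter, or at least three must be darker.
bool passesQuickTest(const std::array<int, 16>& ring, int center, int t) {
    int brighter = 0, darker = 0;
    for (int idx : {0, 8, 4, 12}) {
        const int c = classify(ring[idx], center, t);
        if (c > 0) ++brighter;
        if (c < 0) ++darker;
    }
    return brighter >= 3 || darker >= 3;
}

// Full segment test: true if n contiguous circle pixels (with wrap-around)
// are all brighter than center + t or all darker than center - t.
bool isFastCorner(const std::array<int, 16>& ring, int center, int t, int n) {
    assert(n >= 1 && n <= 16);
    for (int sign : {1, -1}) {
        int run = 0;
        // Visit 16 + n - 1 positions so that runs crossing index 15 -> 0 are found.
        for (int i = 0; i < 16 + n - 1; ++i) {
            if (classify(ring[i % 16], center, t) == sign) {
                if (++run >= n) return true;
            } else {
                run = 0;
            }
        }
    }
    return false;
}
```

A detector would call `passesQuickTest` first and run `isFastCorner` only on the pixels that survive. Note that the quick test as written is only a valid shortcut for N = 12.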

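The FAST detector above still has shortcomings: the quick rejection test does not generalize to N below 12, the detected corners are not necessarily the best ones in the image, and corners may cluster in one region. FAST can address these with a corner classifier trained by machine learning, but ORB does not use it, so it is not covered here. If you are interested, see https://www.cnblogs.com/ronny/p/4078710.html.

FAST as implemented in ORB: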

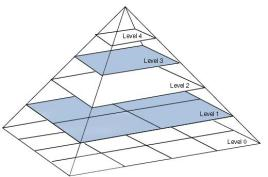

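The ORB authors extract feature points with FAST and found experimentally that FAST-9 works better (FAST-9 requires 9 contiguous pixels, FAST-12 requires 12). Because FAST also responds strongly along edges, the authors rank the N detected keypoints by their Harris corner measure and keep the top m, which improves corner quality (m is set by a parameter). Corners detected this way are still not scale invariant, so the authors build a scale pyramid of the image and run FAST detection with Harris ranking on every pyramid level.

Image pyramid:

An image pyramid is a multi-scale representation of an image in which each level holds the image at a different scale. From top to bottom, the resolution grows by a fixed ratio from level to level (the resizing can use interpolation, filtering, and so on). The pyramid is usually drawn as a stack of progressively smaller images.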
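
To make the level sizes concrete, here is a small sketch that lists the width and height of each pyramid level. It assumes that level 0 is the original image and that each further level shrinks by a constant `scaleFactor`; the function name `pyramidSizes` is hypothetical and only the size arithmetic is shown, not the actual resizing.

```cpp
#include <cassert>
#include <cmath>
#include <utility>
#include <vector>

// Width and height of every pyramid level. Level 0 is the original image and
// each further level is smaller than the previous one by a factor of scaleFactor.
std::vector<std::pair<int, int>> pyramidSizes(int width, int height,
                                              double scaleFactor, int nlevels) {
    assert(scaleFactor > 1.0 && nlevels >= 1);
    std::vector<std::pair<int, int>> sizes;
    for (int level = 0; level < nlevels; ++level) {
        const double scale = 1.0 / std::pow(scaleFactor, level);
        sizes.emplace_back(static_cast<int>(std::lround(width * scale)),
                           static_cast<int>(std::lround(height * scale)));
    }
    return sizes;
}
```

For example, `pyramidSizes(640, 480, 2.0, 3)` yields 640×480, 320×240, and 160×120. ORB's defaults in the source listing further down are a scale factor of 1.2 and 8 levels.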

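2. ORB descriptor computation:

Definition: once the feature points are found, a descriptor must be computed for each of them. The descriptor captures the characteristics of the feature point and is what the later matching step compares.

Properties: a descriptor encodes the attributes of a corner in the image and is used for matching. A good descriptor algorithm should be invariant to viewing distance (that is, image scale), viewing angle, lighting conditions, and so on; in other words, the descriptor should be repeatable.

For descriptor computation, ORB uses the BRIEF algorithm, introduced below. (Readers may be surprised that ORB relies on existing algorithms for both detection and description. I felt the same, but ORB's contribution lies in how it combines and improves them, it is free of the patent restrictions that applied to SIFT and SURF, and it is popular for real-time feature matching.)

BRIEF was proposed in the 2010 paper "BRIEF: Binary Robust Independent Elementary Features". It is a binary descriptor (somewhat similar to the LBP feature of the LBP algorithm). It drops the traditional approach of describing a feature point with regional histograms, which speeds up descriptor construction and reduces matching time.

In simplified form, BRIEF has two steps: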

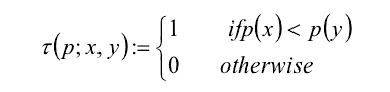

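1. Take an S×S neighborhood window centered on the feature point. Using some rule, choose a pair of points x and y in the window, compare their intensities p(x) and p(y), and assign one bit as follows:

$$\tau(p; x, y) = \begin{cases} 1, & p(x) < p(y) \\ 0, & \text{otherwise} \end{cases}$$

2. Using the same rule, choose N pairs of points in the window and apply the assignment of step 1 to each pair, which yields a binary code (N is usually 256). The pairs are sampled randomly, and the bits are concatenated in the order in which the pairs were chosen.

The rule: the original authors tested the following five sampling methods, of which method (2) performed best:

(1) x and y are both sampled uniformly over the window;

(2) x and y are both sampled from an isotropic Gaussian centered on the feature point with variance S²/25;

(3) x is sampled from a Gaussian with variance S²/25, and y from a Gaussian centered on x with variance S²/100;

(4) x and y are sampled randomly from the discrete locations of a coarse polar grid;

(5) x is fixed at the center and y takes every location of a coarse polar grid.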
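
The two BRIEF steps can be sketched as below. This is an illustration under stated assumptions rather than OpenCV's code: `GrayImage`, `Point`, `PointPair`, and `briefDescriptor` are hypothetical names, the sampling pattern is passed in by the caller, and the caller is assumed to keep every sampled position inside the image. The bit packing (least significant bit first) is also just one possible choice.

```cpp
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <vector>

struct Point { int x; int y; };          // offset relative to the feature point
struct PointPair { Point a; Point b; };  // one binary test

// Minimal grayscale image with row-major storage.
struct GrayImage {
    int width;
    int height;
    std::vector<std::uint8_t> data;
    std::uint8_t at(int x, int y) const { return data[y * width + x]; }
};

// Build a BRIEF-style descriptor: bit i is 1 when p(a_i) < p(b_i).
// Bits are packed 8 per byte, least significant bit first.
std::vector<std::uint8_t> briefDescriptor(const GrayImage& img, Point center,
                                          const std::vector<PointPair>& pairs) {
    assert(pairs.size() % 8 == 0);
    std::vector<std::uint8_t> desc(pairs.size() / 8, 0);
    for (std::size_t i = 0; i < pairs.size(); ++i) {
        const PointPair& pp = pairs[i];
        const std::uint8_t va = img.at(center.x + pp.a.x, center.y + pp.a.y);
        const std::uint8_t vb = img.at(center.x + pp.b.x, center.y + pp.b.y);
        if (va < vb) desc[i / 8] |= static_cast<std::uint8_t>(1u << (i % 8));
    }
    return desc;
}
```

With N = 256 pairs the result is 32 bytes long, which is the `kBytes = 32` constant that appears in the ORB source listing later in this post.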

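ORB's improvements to BRIEF:

ORB's improvements to BRIEF consist of two parts, steered BRIEF and rBRIEF, described in turn below. First, the feature points detected by FAST above are not rotation invariant. ORB determines a dominant orientation for each feature point with the intensity centroid method and uses it to improve the descriptor. The principle behind the rotation invariance is as follows.

Rotation invariance: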

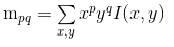

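ORB computes the dominant orientation of each feature point with the intensity centroid method. The moments of the patch around a feature point are defined as:

$$m_{pq} = \sum_{x,y} x^p y^q I(x,y)$$

where I(x,y) is the intensity at point (x,y), with x and y measured relative to the feature point. The centroid of the patch is

$$C = \left( \frac{m_{10}}{m_{00}}, \frac{m_{01}}{m_{00}} \right), \qquad m_{10} = \sum_{x,y} x\, I(x,y), \qquad m_{01} = \sum_{x,y} y\, I(x,y)$$

The orientation of the feature point is defined as $\theta = \operatorname{atan2}(m_{01}, m_{10})$, where atan2 is the quadrant-aware version of arctan.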
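
The orientation formula can be tried out with the following sketch. It is a simplification: it sums over a square patch, whereas the OpenCV listing later in this post walks a circular patch, and the function name `intensityCentroidAngle` and its parameters are made up for illustration.

```cpp
#include <cassert>
#include <cmath>
#include <cstdint>
#include <vector>

// Orientation in radians of the square patch of side 2*half+1 centred on
// (cx, cy), computed as theta = atan2(m01, m10) with
// m10 = sum of x*I(x,y) and m01 = sum of y*I(x,y), x and y relative to the centre.
double intensityCentroidAngle(const std::vector<std::uint8_t>& image,
                              int width, int height, int cx, int cy, int half) {
    assert(cx - half >= 0 && cx + half < width);
    assert(cy - half >= 0 && cy + half < height);
    long long m10 = 0, m01 = 0;
    for (int dy = -half; dy <= half; ++dy) {
        for (int dx = -half; dx <= half; ++dx) {
            const int v = image[(cy + dy) * width + (cx + dx)];
            m10 += dx * v;
            m01 += dy * v;
        }
    }
    return std::atan2(static_cast<double>(m01), static_cast<double>(m10));
}
```

A patch that is bright only on its right edge gives an angle of 0, and one that is bright only on its bottom edge gives π/2 (image y grows downward). The OpenCV code returns degrees via `fastAtan2`, while this sketch returns radians.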

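steered BRIEF (rotation invariance):

Suppose the original BRIEF selects n point pairs in the S×S neighborhood of the feature point, and collect the 2n points as the columns of a matrix D. Rotating by the angle θ gives the new point pairs:

$$D = \begin{pmatrix} x_1 & \cdots & x_{2n} \\ y_1 & \cdots & y_{2n} \end{pmatrix}, \qquad D_\theta = R_\theta D, \qquad R_\theta = \begin{pmatrix} \cos\theta & -\sin\theta \\ \sin\theta & \cos\theta \end{pmatrix}$$

where $D_\theta$ holds the rotated point pairs and $R_\theta$ is the rotation matrix. The binary descriptor is formed by comparing the intensities of each pair at the new positions.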
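
Rotating the sampling pattern is a plain 2D rotation of every offset. The sketch below assumes integer pixel offsets and rounds the rotated coordinates to the nearest pixel; `Offset` and `steerPattern` are hypothetical names used only here.

```cpp
#include <cassert>
#include <cmath>
#include <vector>

struct Offset { int x; int y; };  // sampling position relative to the feature point

// Rotate every sampling offset by theta (radians) and round to the nearest pixel:
// (x', y') = (x cos(theta) - y sin(theta), x sin(theta) + y cos(theta)).
std::vector<Offset> steerPattern(const std::vector<Offset>& pattern, double theta) {
    assert(std::isfinite(theta));
    const double c = std::cos(theta), s = std::sin(theta);
    std::vector<Offset> rotated;
    rotated.reserve(pattern.size());
    for (const Offset& p : pattern) {
        rotated.push_back(Offset{static_cast<int>(std::lround(p.x * c - p.y * s)),
                                 static_cast<int>(std::lround(p.x * s + p.y * c))});
    }
    return rotated;
}
```

The same two expressions appear in the `GET_VALUE` macro of the OpenCV listing later in this post, where `a` and `b` are the cosine and sine of the keypoint angle.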

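rBRIEF improvement:

BRIEF's point pairs are generated randomly, and different random sequences give different results. To find a better set, the ORB authors extracted 300k feature points from a large dataset and evaluated M candidate point pairs on every feature point, forming a 300k × M matrix in which each column holds the bits produced by one point pair $p_i$. The columns are sorted by variance, from largest to smallest. A greedy search then discards columns that are too strongly correlated with the ones already kept, until the 256 best point pairs remain. The descriptor built from these positions is called rBRIEF. ORB also rotates these fixed point pair positions by θ, that is, the tests are evaluated at the steered positions $D_\theta = R_\theta D$.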
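
The greedy selection can be illustrated on a toy bit matrix. This is a rough sketch under assumptions, not the authors' training code: each candidate test is stored as one column of 0/1 values, correlation is measured with the absolute Pearson coefficient, and the threshold `maxCorr` and all names (`BitColumn`, `absCorrelation`, `selectTests`) are made up for illustration. Raising the threshold and retrying when too few tests survive is left out.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <cstddef>
#include <cstdint>
#include <numeric>
#include <vector>

using BitColumn = std::vector<std::uint8_t>;  // one 0/1 result per training keypoint

static double mean(const BitColumn& c) {
    return std::accumulate(c.begin(), c.end(), 0.0) / static_cast<double>(c.size());
}

// Absolute Pearson correlation of two 0/1 columns.
// A constant column has no variance and is treated as fully correlated.
static double absCorrelation(const BitColumn& a, const BitColumn& b) {
    const double ma = mean(a), mb = mean(b);
    double cov = 0.0;
    for (std::size_t i = 0; i < a.size(); ++i) cov += (a[i] - ma) * (b[i] - mb);
    cov /= static_cast<double>(a.size());
    const double denom = std::sqrt(ma * (1 - ma) * mb * (1 - mb));
    return denom > 0.0 ? std::fabs(cov) / denom : 1.0;
}

// Visit the tests from highest to lowest variance and keep a test only if its
// correlation with every test kept so far stays below maxCorr.
std::vector<int> selectTests(const std::vector<BitColumn>& tests,
                             std::size_t wanted, double maxCorr) {
    assert(!tests.empty());
    std::vector<int> order(tests.size());
    std::iota(order.begin(), order.end(), 0);
    // For a 0/1 variable with mean m the variance is m(1 - m), largest at m = 0.5.
    std::stable_sort(order.begin(), order.end(), [&](int l, int r) {
        const double ml = mean(tests[l]), mr = mean(tests[r]);
        return ml * (1 - ml) > mr * (1 - mr);
    });
    std::vector<int> kept;
    for (int idx : order) {
        if (kept.size() == wanted) break;
        bool ok = true;
        for (int k : kept) {
            if (absCorrelation(tests[idx], tests[k]) >= maxCorr) { ok = false; break; }
        }
        if (ok) kept.push_back(idx);
    }
    return kept;
}
```

In the toy case where two columns are identical and a third is uncorrelated with them, the duplicate is skipped and the uncorrelated column is kept instead.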

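Finally, the descriptors are matched. In OpenCV, matching for ORB, SIFT, and SURF goes through one common set of wrapped classes. The next section introduces OpenCV's KeyPoint, the matching algorithms, and the match type (mostly based on the OpenCV code).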

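III. Code:

First, the matching part, DescriptorMatcher:

Feature matching in OpenCV is implemented through DescriptorMatcher, the abstract base class of the matchers. Its concrete classes are:

class FlannBasedMatcher

class BFMatcher

I have not located the source code yet, so the internals are not covered here, only the usage. There are two ways to create a matcher (method 1 is recommended: it is convenient and gives access to every matcher type):

Method 1:

static Ptr<DescriptorMatcher> create(const string& descriptorMatcherType)

For example: cv::Ptr<cv::DescriptorMatcher> matcher = cv::DescriptorMatcher::create("FlannBased");

Method 2:

DescriptorMatcher *pMatcher = new BFMatcher;

DescriptorMatcher provides the following interface functions (for ordinary use, create() and match() are enough):

create(): creates a matcher. The supported types are BruteForce, BruteForce-L1, BruteForce-Hamming, BruteForce-Hamming(2), and FlannBased (I currently use FlannBased, which works acceptably; I have not tried the others).

match(desL, desR, matches): matches by descriptor. desL and desR are the two descriptor sets as Mat, and matches is a vector of DMatch; the DMatch type is described below.

knnMatch(): finds the k best matches for each descriptor.

add(): adds descriptors to the train descriptor collection. If the collection is not empty, the new descriptors are appended to it.

getTrainDescriptors(): returns the train descriptor collection.

isMaskSupported(): returns true if the matcher supports masks.

radiusMatch(): finds the matches whose distance does not exceed a given maximum.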

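The DMatch type:

Short version (for those who would rather skip the source below): DMatch carries four useful fields: queryIdx (index of the descriptor being matched), trainIdx (index of the descriptor it was matched to), imgIdx (index of the matched image), and distance (the distance between the two descriptors; smaller means a better match). queryIdx indexes the keypoints of the image whose descriptors are the first argument of match(), and trainIdx indexes the keypoints of the image whose descriptors are the second argument. imgIdx only matters when matching against several images, where it identifies the matched image. Note that query is the side being matched and train is the side matched against. The worked example below shows how to use them.

/*
 * DMatch is the struct that stores match information: query is the descriptor being matched and train is the descriptor matched against. Matching in OpenCV is done with
 * void DescriptorMatcher::match( const Mat& queryDescriptors, const Mat& trainDescriptors, vector<DMatch>& matches, const Mat& mask ) const
 * In the arguments of match, the first descriptor set is the query descriptors and the second is the train descriptors.
 * Example: with 20 query descriptors and 30 train descriptors, the vector<DMatch> filled by DescriptorMatcher::match has size 20;
 * with the roles swapped, it has size 30.
 */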

struct CV_EXPORTS_W_SIMPLE DMatch
{
    // Default constructor. FLT_MAX is the largest finite float, used here to mean "no match yet".
    // #define FLT_MAX 3.402823466e+38F /* max value */
    CV_WRAP DMatch() : queryIdx(-1), trainIdx(-1), imgIdx(-1), distance(FLT_MAX) {}

    // Constructor without an image index
    CV_WRAP DMatch( int _queryIdx, int _trainIdx, float _distance ) :
        queryIdx(_queryIdx), trainIdx(_trainIdx), imgIdx(-1), distance(_distance) {}

    // Constructor with an image index
    CV_WRAP DMatch( int _queryIdx, int _trainIdx, int _imgIdx, float _distance ) :
        queryIdx(_queryIdx), trainIdx(_trainIdx), imgIdx(_imgIdx), distance(_distance) {}

    // queryIdx: index of the query descriptor (the first descriptor set passed to match)
    CV_PROP_RW int queryIdx; // query descriptor index

    // trainIdx: index of the train descriptor (the second descriptor set passed to match)
    CV_PROP_RW int trainIdx; // train descriptor index

    // imgIdx: index of the image that was matched against.
    // Example: when the SIFT descriptors of one image are matched against the descriptors of
    // ten other images to find the most similar image, imgIdx tells you which image matched.
    CV_PROP_RW int imgIdx; // train image index

    // distance: distance between the two descriptors
    CV_PROP_RW float distance;

    // Comparison operator: orders DMatch objects by distance, true if this distance is smaller
    // less is better
    bool operator<( const DMatch &m ) const
    {
        return distance < m.distance;
    }
};
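
To show how the DMatch fields come about, here is a standard-library-only sketch of a brute-force matcher over binary descriptors with the Hamming distance. It does not use OpenCV: `Descriptor`, `Match`, `hammingDistance`, and `bruteForceMatch` are hypothetical stand-ins, and `Match` only mirrors the three DMatch fields that are relevant here.

```cpp
#include <bitset>
#include <cassert>
#include <cstddef>
#include <cstdint>
#include <limits>
#include <vector>

using Descriptor = std::vector<std::uint8_t>;  // e.g. 32 bytes for ORB

struct Match {  // mirrors the DMatch fields used here
    int queryIdx;
    int trainIdx;
    float distance;
};

// Number of differing bits between two equally long binary descriptors.
int hammingDistance(const Descriptor& a, const Descriptor& b) {
    assert(a.size() == b.size());
    int dist = 0;
    for (std::size_t i = 0; i < a.size(); ++i)
        dist += static_cast<int>(std::bitset<8>(a[i] ^ b[i]).count());
    return dist;
}

// For every query descriptor, find the closest train descriptor.
// The result holds one entry per query descriptor.
std::vector<Match> bruteForceMatch(const std::vector<Descriptor>& query,
                                   const std::vector<Descriptor>& train) {
    std::vector<Match> matches;
    if (train.empty()) return matches;
    for (std::size_t q = 0; q < query.size(); ++q) {
        Match best{static_cast<int>(q), -1, std::numeric_limits<float>::max()};
        for (std::size_t t = 0; t < train.size(); ++t) {
            const float d = static_cast<float>(hammingDistance(query[q], train[t]));
            if (d < best.distance) {
                best.trainIdx = static_cast<int>(t);
                best.distance = d;
            }
        }
        matches.push_back(best);
    }
    return matches;
}
```

With 2 query descriptors and 3 train descriptors the result has 2 entries, which is the same query-sized behavior as in the 20/30 example above.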

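The KeyPoint type (I could not find a text version, so a few borrowed screenshots follow):

Short version (for the lazy): the commonly used KeyPoint fields are pt (2D coordinates), size (diameter of the keypoint neighborhood), angle (orientation of the keypoint, 0 to 360°), response (response strength of the keypoint, usable for sorting), octave (pyramid level of the keypoint), and class_id (used to tell keypoints apart when classifying images; it defaults to -1. You can ignore it for now; by the time you need it you will understand what it means).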

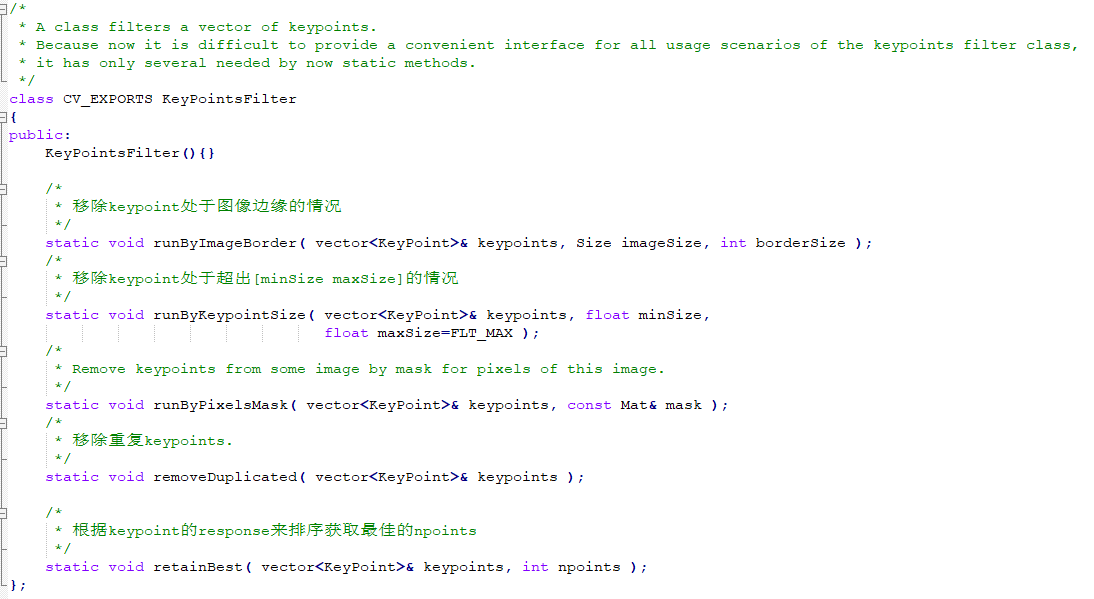

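The KeyPointsFilter type (a ready-made helper class for processing KeyPoints that the algorithms already use internally. You normally need it only when writing your own feature matching algorithm):

KeyPointsFilter provides five functions: runByImageBorder(), runByKeypointSize(), runByPixelsMask(), removeDuplicated(), and retainBest().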
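
As an illustration of what a filter such as retainBest() does, the sketch below keeps the n keypoints with the strongest response. It is not OpenCV's implementation, which may treat keypoints with equal response differently; `SimpleKeyPoint` and `retainBestSketch` are hypothetical names.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct SimpleKeyPoint {  // hypothetical stand-in for cv::KeyPoint
    float x;
    float y;
    float response;
};

// Keep only the n keypoints with the largest response, strongest first.
void retainBestSketch(std::vector<SimpleKeyPoint>& keypoints, std::size_t n) {
    if (keypoints.size() <= n) return;  // nothing to drop
    std::partial_sort(keypoints.begin(),
                      keypoints.begin() + static_cast<std::ptrdiff_t>(n),
                      keypoints.end(),
                      [](const SimpleKeyPoint& a, const SimpleKeyPoint& b) {
                          return a.response > b.response;
                      });
    keypoints.resize(n);
    assert(keypoints.size() == n);
}
```

This is the kind of culling ORB applies after ranking FAST corners by their Harris score, as the source listing further down shows.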

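ORB API:

It has three parts: detection, description, and matching.

// 1. Detection
cv::Ptr<cv::ORB> orb = cv::ORB::create(50); // create the ORB object; the argument is the maximum number of feature points to keep
std::vector<cv::KeyPoint> keyPointL, keyPointR; // keypoint containers
orb->detect(imageL, keyPointL); // detect keypoints
orb->detect(imageR, keyPointR); // detect keypoints

// 2. Description
cv::Mat despL, despR; // descriptors
orb->compute(imageL, keyPointL, despL); // compute descriptors for the detected keypoints
orb->compute(imageR, keyPointR, despR);
// Alternatively, detect and compute in one call, equivalent to detect + compute above; cv::Mat() means no mask
orb->detectAndCompute(imageL, cv::Mat(), keyPointL, despL);
orb->detectAndCompute(imageR, cv::Mat(), keyPointR, despR);

// 3. Matching
std::vector<cv::DMatch> matches;
// FlannBased expects CV_32F descriptors while ORB produces CV_8U, so convert first
despL.convertTo(despL, CV_32F);
despR.convertTo(despR, CV_32F);
cv::Ptr<cv::DescriptorMatcher> matcher = cv::DescriptorMatcher::create("FlannBased");
matcher->match(despL, despR, matches);
std::vector<cv::Point2f> point_l, point_r;
for (size_t i = 0; i < matches.size(); i++) // collect the matched points
{
    point_l.push_back(keyPointL[matches[i].queryIdx].pt);
    point_r.push_back(keyPointR[matches[i].trainIdx].pt);
}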

Worked example:

#include <opencv2/opencv.hpp>

int main()
{
    cv::Mat imageL = cv::imread("./I/like/Study.png");         // path of image 1
    cv::Mat imageR = cv::imread("./Study/make/me/happy.png");  // path of image 2
    if (imageL.empty() || imageR.empty())
        return -1;  // an image could not be read
    cv::cvtColor(imageL, imageL, cv::COLOR_BGR2GRAY);
    cv::cvtColor(imageR, imageR, cv::COLOR_BGR2GRAY);
    cv::resize(imageL, imageL, cv::Size(120, 90));
    cv::resize(imageR, imageR, cv::Size(120, 90));

    // ORB
    cv::Ptr<cv::ORB> orb = cv::ORB::create(50);

    // feature points
    std::vector<cv::KeyPoint> keyPointL, keyPointR;
    // detect the feature points only
    orb->detect(imageL, keyPointL);
    orb->detect(imageR, keyPointR);

    // draw the feature points
    cv::Mat keyPointImageL, keyPointImageR;
    cv::drawKeypoints(imageL, keyPointL, keyPointImageL, cv::Scalar::all(-1), cv::DrawMatchesFlags::DRAW_RICH_KEYPOINTS);
    cv::drawKeypoints(imageR, keyPointR, keyPointImageR, cv::Scalar::all(-1), cv::DrawMatchesFlags::DRAW_RICH_KEYPOINTS);

    // show the feature points
    cv::namedWindow("KeyPoints of imageL");
    cv::namedWindow("KeyPoints of imageR");
    cv::imshow("KeyPoints of imageL", keyPointImageL);
    cv::imshow("KeyPoints of imageR", keyPointImageR);

    // feature matching: detect the feature points and compute their descriptors
    cv::Mat despL, despR;
    orb->detectAndCompute(imageL, cv::Mat(), keyPointL, despL);
    orb->detectAndCompute(imageR, cv::Mat(), keyPointR, despR);

    std::vector<cv::DMatch> matches;

    // FlannBased needs CV_32F descriptors, but ORB produces a different type, so convert first
    if (despL.type() != CV_32F || despR.type() != CV_32F)
    {
        despL.convertTo(despL, CV_32F);
        despR.convertTo(despR, CV_32F);
    }
    cv::Ptr<cv::DescriptorMatcher> matcher = cv::DescriptorMatcher::create("FlannBased");
    matcher->match(despL, despR, matches);

    // pick the good matches: keep match i if at least size - 15 matches have a larger distance,
    // which is roughly the 15 best matches
    std::vector<cv::DMatch> good_matches;
    int size = (int)matches.size();
    for (int i = 0; i < size; i++)
    {
        int low = 0;  // number of matches with a larger distance than match i
        for (int n = 0; n < size; n++)
        {
            if (matches[i].distance < matches[n].distance)
                low += 1;
        }
        if (low >= size - 15)
            good_matches.push_back(matches[i]);
    }

    cv::Mat imageOutput;
    cv::drawMatches(imageL, keyPointL, imageR, keyPointR, good_matches, imageOutput);
    cv::namedWindow("picture of matching", cv::WINDOW_NORMAL);
    cv::imshow("picture of matching", imageOutput);
    cv::waitKey(0);
    return 0;
}
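
The good-match selection in the example compares every match with every other match. A simpler way to get a similar result is to sort by distance and keep the first k entries. The sketch below shows this with a plain struct so that it compiles without OpenCV; `Match` and `bestMatches` are hypothetical names, and with cv::DMatch the same idea works because DMatch defines `operator<` on distance.

```cpp
#include <algorithm>
#include <cassert>
#include <cstddef>
#include <vector>

struct Match {  // stand-in for cv::DMatch
    int queryIdx;
    int trainIdx;
    float distance;
};

// Return the k matches with the smallest distance, best first.
std::vector<Match> bestMatches(std::vector<Match> matches, std::size_t k) {
    std::sort(matches.begin(), matches.end(),
              [](const Match& a, const Match& b) { return a.distance < b.distance; });
    if (matches.size() > k) matches.resize(k);
    assert(matches.size() <= k);
    return matches;
}
```

Sorting costs O(n log n) instead of the O(n²) pairwise comparison. The two approaches can differ when several matches share the same distance.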

ORB source code:

class CV_EXPORTS_W ORB : public Feature2D
{
public:
    // the size of the signature in bytes
    enum { kBytes = 32, HARRIS_SCORE=0, FAST_SCORE=1 };

    CV_WRAP explicit ORB(int nfeatures = 500, float scaleFactor = 1.2f, int nlevels = 8, int edgeThreshold = 31,
                         int firstLevel = 0, int WTA_K=2, int scoreType=ORB::HARRIS_SCORE, int patchSize=31 );

    // returns the descriptor size in bytes
    int descriptorSize() const;
    // returns the descriptor type
    int descriptorType() const;

    // Compute the ORB keypoints on an image
    void operator()(InputArray image, InputArray mask, vector<KeyPoint>& keypoints) const;

    // Compute the ORB keypoints and descriptors on an image
    void operator()( InputArray image, InputArray mask, vector<KeyPoint>& keypoints,
                     OutputArray descriptors, bool useProvidedKeypoints=false ) const;

    AlgorithmInfo* info() const;

protected:
    void computeImpl( const Mat& image, vector<KeyPoint>& keypoints, Mat& descriptors ) const;
    void detectImpl( const Mat& image, vector<KeyPoint>& keypoints, const Mat& mask=Mat() ) const;

    CV_PROP_RW int nfeatures;
    CV_PROP_RW double scaleFactor;
    CV_PROP_RW int nlevels;
    CV_PROP_RW int edgeThreshold;
    CV_PROP_RW int firstLevel;
    CV_PROP_RW int WTA_K;
    CV_PROP_RW int scoreType;
    CV_PROP_RW int patchSize;
};

/** Compute the ORB features and descriptors on an image
 * @param keypoints the resulting keypoints
 * @param do_keypoints if true, the keypoints are computed, otherwise used as an input
 */
void ORB::operator()( InputArray _image, InputArray _mask, vector<KeyPoint>& _keypoints,
                      OutputArray _descriptors, bool useProvidedKeypoints) const
{
    CV_Assert(patchSize >= 2);

    bool do_keypoints = !useProvidedKeypoints;
    bool do_descriptors = _descriptors.needed();

    if( (!do_keypoints && !do_descriptors) || _image.empty() )
        return;

    // ROI handling
    const int HARRIS_BLOCK_SIZE = 9;
    int halfPatchSize = patchSize / 2;
    int border = std::max(edgeThreshold, std::max(halfPatchSize, HARRIS_BLOCK_SIZE/2))+1;

    Mat image = _image.getMat(), mask = _mask.getMat();
    if( image.type() != CV_8UC1 )
        cvtColor(_image, image, CV_BGR2GRAY);

    int levelsNum = this->nlevels;

    if( !do_keypoints )
    {
        // pre-computed keypoints may use more levels than set in the parameters
        levelsNum = 0;
        for( size_t i = 0; i < _keypoints.size(); i++ )
            levelsNum = std::max(levelsNum, std::max(_keypoints[i].octave, 0));
        levelsNum++;
    }

    // Pre-compute the scale pyramids
    vector<Mat> imagePyramid(levelsNum), maskPyramid(levelsNum);
    for (int level = 0; level < levelsNum; ++level)
    {
        float scale = 1/getScale(level, firstLevel, scaleFactor);
        /* getScale is defined as:
           static inline float getScale(int level, int firstLevel, double scaleFactor)
           { return (float)std::pow(scaleFactor, (double)(level - firstLevel)); }
        */
        Size sz(cvRound(image.cols*scale), cvRound(image.rows*scale));
        Size wholeSize(sz.width + border*2, sz.height + border*2);
        Mat temp(wholeSize, image.type()), masktemp;
        imagePyramid[level] = temp(Rect(border, border, sz.width, sz.height));

        if( !mask.empty() )
        {
            masktemp = Mat(wholeSize, mask.type());
            maskPyramid[level] = masktemp(Rect(border, border, sz.width, sz.height));
        }

        // Compute the resized image
        if( level != firstLevel )
        {
            if( level < firstLevel )
            {
                resize(image, imagePyramid[level], sz, 0, 0, INTER_LINEAR);
                if (!mask.empty())
                    resize(mask, maskPyramid[level], sz, 0, 0, INTER_LINEAR);
            }
            else
            {
                resize(imagePyramid[level-1], imagePyramid[level], sz, 0, 0, INTER_LINEAR);
                if (!mask.empty())
                {
                    resize(maskPyramid[level-1], maskPyramid[level], sz, 0, 0, INTER_LINEAR);
                    threshold(maskPyramid[level], maskPyramid[level], 254, 0, THRESH_TOZERO);
                }
            }

            copyMakeBorder(imagePyramid[level], temp, border, border, border, border,
                           BORDER_REFLECT_101+BORDER_ISOLATED);
            if (!mask.empty())
                copyMakeBorder(maskPyramid[level], masktemp, border, border, border, border,
                               BORDER_CONSTANT+BORDER_ISOLATED);
        }
        else
        {
            copyMakeBorder(image, temp, border, border, border, border,
                           BORDER_REFLECT_101);
            if( !mask.empty() )
                copyMakeBorder(mask, masktemp, border, border, border, border,
                               BORDER_CONSTANT+BORDER_ISOLATED);
        }
    }

    // Pre-compute the keypoints (the best over all scales are kept, so this is done beforehand)
    vector < vector<KeyPoint> > allKeypoints;
    if( do_keypoints )
    {
        // Get keypoints far enough from the border that the descriptor needs no further check
        computeKeyPoints(imagePyramid, maskPyramid, allKeypoints,
                         nfeatures, firstLevel, scaleFactor,
                         edgeThreshold, patchSize, scoreType);
    }
    else
    {
        // Remove keypoints very close to the border
        KeyPointsFilter::runByImageBorder(_keypoints, image.size(), edgeThreshold);

        // Cluster the input keypoints depending on the level they were computed at
        allKeypoints.resize(levelsNum);
        for (vector<KeyPoint>::iterator keypoint = _keypoints.begin(),
             keypointEnd = _keypoints.end(); keypoint != keypointEnd; ++keypoint)
            allKeypoints[keypoint->octave].push_back(*keypoint);

        // Make sure we rescale the coordinates
        for (int level = 0; level < levelsNum; ++level)
        {
            if (level == firstLevel)
                continue;

            vector<KeyPoint> & keypoints = allKeypoints[level];
            float scale = 1/getScale(level, firstLevel, scaleFactor);
            for (vector<KeyPoint>::iterator keypoint = keypoints.begin(),
                 keypointEnd = keypoints.end(); keypoint != keypointEnd; ++keypoint)
                keypoint->pt *= scale;
        }
    }

    Mat descriptors;
    vector<Point> pattern;

    if( do_descriptors )
    {
        int nkeypoints = 0;
        for (int level = 0; level < levelsNum; ++level)
            nkeypoints += (int)allKeypoints[level].size();
        if( nkeypoints == 0 )
            _descriptors.release();
        else
        {
            _descriptors.create(nkeypoints, descriptorSize(), CV_8U);
            descriptors = _descriptors.getMat();
        }

        const int npoints = 512;
        Point patternbuf[npoints];
        const Point* pattern0 = (const Point*)bit_pattern_31_;

        if( patchSize != 31 )
        {
            pattern0 = patternbuf;
            makeRandomPattern(patchSize, patternbuf, npoints);
        }

        CV_Assert( WTA_K == 2 || WTA_K == 3 || WTA_K == 4 );

        if( WTA_K == 2 )
            std::copy(pattern0, pattern0 + npoints, std::back_inserter(pattern));
        else
        {
            int ntuples = descriptorSize()*4;
            initializeOrbPattern(pattern0, pattern, ntuples, WTA_K, npoints);
        }
    }

    _keypoints.clear();
    int offset = 0;
    for (int level = 0; level < levelsNum; ++level)
    {
        // Get the features of this level
        vector<KeyPoint>& keypoints = allKeypoints[level];
        int nkeypoints = (int)keypoints.size();

        // Compute the descriptors
        if (do_descriptors)
        {
            Mat desc;
            if (!descriptors.empty())
            {
                desc = descriptors.rowRange(offset, offset + nkeypoints);
            }

            offset += nkeypoints;
            // preprocess the resized image
            Mat& workingMat = imagePyramid[level];
            GaussianBlur(workingMat, workingMat, Size(7, 7), 2, 2, BORDER_REFLECT_101);
            computeDescriptors(workingMat, keypoints, desc, pattern, descriptorSize(), WTA_K);
        }

        // Scale the coordinates back to the original image
        if (level != firstLevel)
        {
            float scale = getScale(level, firstLevel, scaleFactor);
            for (vector<KeyPoint>::iterator keypoint = keypoints.begin(),
                 keypointEnd = keypoints.end(); keypoint != keypointEnd; ++keypoint)
                keypoint->pt *= scale;
        }
        // And add the keypoints to the output
        _keypoints.insert(_keypoints.end(), keypoints.begin(), keypoints.end());
    }
}

/** Compute the ORB keypoints on an image
 * @param keypoints the resulting keypoints, clustered per level
 */
static void computeKeyPoints(const vector<Mat>& imagePyramid,
                             const vector<Mat>& maskPyramid,
                             vector<vector<KeyPoint> >& allKeypoints,
                             int nfeatures, int firstLevel, double scaleFactor,
                             int edgeThreshold, int patchSize, int scoreType )
{
    int nlevels = (int)imagePyramid.size();
    vector<int> nfeaturesPerLevel(nlevels);

    // distribute the requested number of features over the levels
    float factor = (float)(1.0 / scaleFactor);
    float ndesiredFeaturesPerScale = nfeatures*(1 - factor)/(1 - (float)pow((double)factor, (double)nlevels));

    int sumFeatures = 0;
    for( int level = 0; level < nlevels-1; level++ )
    {
        nfeaturesPerLevel[level] = cvRound(ndesiredFeaturesPerScale);
        sumFeatures += nfeaturesPerLevel[level];
        ndesiredFeaturesPerScale *= factor;
    }
    nfeaturesPerLevel[nlevels-1] = std::max(nfeatures - sumFeatures, 0);

    // pre-compute the end of a row in a circular patch
    int halfPatchSize = patchSize / 2;
    vector<int> umax(halfPatchSize + 2);

    int v, v0, vmax = cvFloor(halfPatchSize * sqrt(2.f) / 2 + 1);
    int vmin = cvCeil(halfPatchSize * sqrt(2.f) / 2);
    for (v = 0; v <= vmax; ++v)
        umax[v] = cvRound(sqrt((double)halfPatchSize * halfPatchSize - v * v));

    // Make sure we are symmetric
    for (v = halfPatchSize, v0 = 0; v >= vmin; --v)
    {
        while (umax[v0] == umax[v0 + 1])
            ++v0;
        umax[v] = v0;
        ++v0;
    }

    allKeypoints.resize(nlevels);

    for (int level = 0; level < nlevels; ++level)
    {
        int featuresNum = nfeaturesPerLevel[level];
        allKeypoints[level].reserve(featuresNum*2);

        vector<KeyPoint> & keypoints = allKeypoints[level];

        // Detect FAST features with threshold 20
        FastFeatureDetector fd(20, true);
        fd.detect(imagePyramid[level], keypoints, maskPyramid[level]);

        // Remove keypoints very close to the border
        KeyPointsFilter::runByImageBorder(keypoints, imagePyramid[level].size(), edgeThreshold);

        if( scoreType == ORB::HARRIS_SCORE )
        {
            // Keep more points than necessary, then rescore them with the Harris measure
            KeyPointsFilter::retainBest(keypoints, 2 * featuresNum);
            HarrisResponses(imagePyramid[level], keypoints, 7, HARRIS_K);
        }

        // Cull to the final desired number, using the Harris scores or the original FAST scores
        KeyPointsFilter::retainBest(keypoints, featuresNum);

        float sf = getScale(level, firstLevel, scaleFactor);

        // Set the level and size of each keypoint
        for (vector<KeyPoint>::iterator keypoint = keypoints.begin(),
             keypointEnd = keypoints.end(); keypoint != keypointEnd; ++keypoint)
        {
            keypoint->octave = level;
            keypoint->size = patchSize*sf;
        }

        computeOrientation(imagePyramid[level], keypoints, halfPatchSize, umax);
    }
}

static void computeOrientation(const Mat& image, vector<KeyPoint>& keypoints,
                               int halfPatchSize, const vector<int>& umax)
{
    // Process each keypoint
    for (vector<KeyPoint>::iterator keypoint = keypoints.begin(),
         keypointEnd = keypoints.end(); keypoint != keypointEnd; ++keypoint)
    {
        keypoint->angle = IC_Angle(image, halfPatchSize, keypoint->pt, umax);
    }
}

static float IC_Angle(const Mat& image, const int half_k, Point2f pt,
                      const vector<int> & u_max)
{
    int m_01 = 0, m_10 = 0;

    const uchar* center = &image.at<uchar> (cvRound(pt.y), cvRound(pt.x));

    // Treat the center line differently, v=0
    for (int u = -half_k; u <= half_k; ++u)
        m_10 += u * center[u];

    // Go line by line in the circular patch
    int step = (int)image.step1();
    for (int v = 1; v <= half_k; ++v)
    {
        // Proceed over the two lines v and -v at once
        int v_sum = 0;
        int d = u_max[v];
        for (int u = -d; u <= d; ++u)
        {
            int val_plus = center[u + v*step], val_minus = center[u - v*step];
            v_sum += (val_plus - val_minus);
            m_10 += u * (val_plus + val_minus);
        }
        m_01 += v * v_sum;
    }

    return fastAtan2((float)m_01, (float)m_10);
}

static void computeDescriptors(const Mat& image, vector<KeyPoint>& keypoints, Mat& descriptors,
                               const vector<Point>& pattern, int dsize, int WTA_K)
{
    // the image must already be grayscale
    CV_Assert(image.type() == CV_8UC1);
    // create the descriptor mat: keypoints.size() rows, dsize columns
    descriptors = Mat::zeros((int)keypoints.size(), dsize, CV_8UC1);

    for (size_t i = 0; i < keypoints.size(); i++)
        computeOrbDescriptor(keypoints[i], image, &pattern[0], descriptors.ptr((int)i), dsize, WTA_K);
}

static void computeOrbDescriptor(const KeyPoint& kpt,
                                 const Mat& img, const Point* pattern,
                                 uchar* desc, int dsize, int WTA_K)
{
    float angle = kpt.angle;
    angle *= (float)(CV_PI/180.f);
    float a = (float)cos(angle), b = (float)sin(angle);

    const uchar* center = &img.at<uchar>(cvRound(kpt.pt.y), cvRound(kpt.pt.x));
    int step = (int)img.step;

#if 1
    // read the pixel at the pattern position rotated by the keypoint angle
    #define GET_VALUE(idx) \
        center[cvRound(pattern[idx].x*b + pattern[idx].y*a)*step + \
               cvRound(pattern[idx].x*a - pattern[idx].y*b)]
#else
    // alternative with bilinear interpolation
    float x, y;
    int ix, iy;
    #define GET_VALUE(idx) \
        (x = pattern[idx].x*a - pattern[idx].y*b, \
        y = pattern[idx].x*b + pattern[idx].y*a, \
        ix = cvFloor(x), iy = cvFloor(y), \
        x -= ix, y -= iy, \
        cvRound(center[iy*step + ix]*(1-x)*(1-y) + center[(iy+1)*step + ix]*(1-x)*y + \
                center[iy*step + ix+1]*x*(1-y) + center[(iy+1)*step + ix+1]*x*y))
#endif

    if( WTA_K == 2 )
    {
        // 8 point pairs produce the 8 bits of one descriptor byte
        for (int i = 0; i < dsize; ++i, pattern += 16)
        {
            int t0, t1, val;
            t0 = GET_VALUE(0); t1 = GET_VALUE(1);
            val = t0 < t1;
            t0 = GET_VALUE(2); t1 = GET_VALUE(3);
            val |= (t0 < t1) << 1;
            t0 = GET_VALUE(4); t1 = GET_VALUE(5);
            val |= (t0 < t1) << 2;
            t0 = GET_VALUE(6); t1 = GET_VALUE(7);
            val |= (t0 < t1) << 3;
            t0 = GET_VALUE(8); t1 = GET_VALUE(9);
            val |= (t0 < t1) << 4;
            t0 = GET_VALUE(10); t1 = GET_VALUE(11);
            val |= (t0 < t1) << 5;
            t0 = GET_VALUE(12); t1 = GET_VALUE(13);
            val |= (t0 < t1) << 6;
            t0 = GET_VALUE(14); t1 = GET_VALUE(15);
            val |= (t0 < t1) << 7;

            desc[i] = (uchar)val;
        }
    }
    else if( WTA_K == 3 )
    {
        // 4 point triples produce 2 bits each
        for (int i = 0; i < dsize; ++i, pattern += 12)
        {
            int t0, t1, t2, val;
            t0 = GET_VALUE(0); t1 = GET_VALUE(1); t2 = GET_VALUE(2);
            val = t2 > t1 ? (t2 > t0 ? 2 : 0) : (t1 > t0);

            t0 = GET_VALUE(3); t1 = GET_VALUE(4); t2 = GET_VALUE(5);
            val |= (t2 > t1 ? (t2 > t0 ? 2 : 0) : (t1 > t0)) << 2;

            t0 = GET_VALUE(6); t1 = GET_VALUE(7); t2 = GET_VALUE(8);
            val |= (t2 > t1 ? (t2 > t0 ? 2 : 0) : (t1 > t0)) << 4;

            t0 = GET_VALUE(9); t1 = GET_VALUE(10); t2 = GET_VALUE(11);
            val |= (t2 > t1 ? (t2 > t0 ? 2 : 0) : (t1 > t0)) << 6;

            desc[i] = (uchar)val;
        }
    }
    else if( WTA_K == 4 )
    {
        // 4 point quadruples produce 2 bits each
        for (int i = 0; i < dsize; ++i, pattern += 16)
        {
            int t0, t1, t2, t3, u, v, k, val;
            t0 = GET_VALUE(0); t1 = GET_VALUE(1);
            t2 = GET_VALUE(2); t3 = GET_VALUE(3);
            u = 0, v = 2;
            if( t1 > t0 ) t0 = t1, u = 1;
            if( t3 > t2 ) t2 = t3, v = 3;
            k = t0 > t2 ? u : v;
            val = k;

            t0 = GET_VALUE(4); t1 = GET_VALUE(5);
            t2 = GET_VALUE(6); t3 = GET_VALUE(7);
            u = 0, v = 2;
            if( t1 > t0 ) t0 = t1, u = 1;
            if( t3 > t2 ) t2 = t3, v = 3;
            k = t0 > t2 ? u : v;
            val |= k << 2;

            t0 = GET_VALUE(8); t1 = GET_VALUE(9);
            t2 = GET_VALUE(10); t3 = GET_VALUE(11);
            u = 0, v = 2;
            if( t1 > t0 ) t0 = t1, u = 1;
            if( t3 > t2 ) t2 = t3, v = 3;
            k = t0 > t2 ? u : v;
            val |= k << 4;

            t0 = GET_VALUE(12); t1 = GET_VALUE(13);
            t2 = GET_VALUE(14); t3 = GET_VALUE(15);
            u = 0, v = 2;
            if( t1 > t0 ) t0 = t1, u = 1;
            if( t3 > t2 ) t2 = t3, v = 3;
            k = t0 > t2 ? u : v;
            val |= k << 6;

            desc[i] = (uchar)val;
        }
    }
    else
        CV_Error( CV_StsBadSize, "Wrong WTA_K. It can be only 2, 3 or 4." );

    #undef GET_VALUE
}
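
One detail of computeKeyPoints that is easy to miss is how the requested number of features is divided among the pyramid levels: each level gets 1/scaleFactor times the share of the level below it, and the last level takes the remainder. The sketch below reproduces that arithmetic with the standard library only. The function name `featuresPerLevel` is hypothetical, and it uses double where the listing uses float, so results could in principle differ by one in borderline cases.

```cpp
#include <algorithm>
#include <cassert>
#include <cmath>
#include <vector>

// Split nfeatures across nlevels as a geometric series with ratio 1/scaleFactor.
// The last level receives whatever is left so that the total equals nfeatures.
std::vector<int> featuresPerLevel(int nfeatures, double scaleFactor, int nlevels) {
    assert(nfeatures >= 0 && scaleFactor > 1.0 && nlevels >= 1);
    std::vector<int> perLevel(nlevels);
    const double factor = 1.0 / scaleFactor;
    double desired = nfeatures * (1.0 - factor) / (1.0 - std::pow(factor, nlevels));
    int sum = 0;
    for (int level = 0; level < nlevels - 1; ++level) {
        perLevel[level] = static_cast<int>(std::lround(desired));
        sum += perLevel[level];
        desired *= factor;
    }
    perLevel[nlevels - 1] = std::max(nfeatures - sum, 0);  // remainder goes to the last level
    return perLevel;
}
```

With the defaults from the class declaration (500 features, scale factor 1.2, 8 levels), the finest level gets the largest share and the counts shrink toward the coarsest level.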

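If you spot any mistakes, corrections and criticism are welcome. Some of the material I drew on is not listed below because I forgot to record it; my apologies.

References: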

https://blog.csdn.net/Small_Munich/article/details/80866162

https://www.cnblogs.com/TransTown/p/7396996.html

http://docs.opencv.org/3.3.0/d2/d29/classcv_1_1KeyPoint.html

https://blog.csdn.net/Darlingqiang/article/details/79404869

https://blog.csdn.net/frozenspring/article/details/78146076

https://blog.csdn.net/zcg1942/article/details/83824367

https://www.cnblogs.com/wyuzl/p/7856863.html

Source: https://www.cnblogs.com/urglyfish/p/12459650.html