Creating the project is not covered here; for that, see: https://www.cnblogs.com/braveym/p/12214367.html

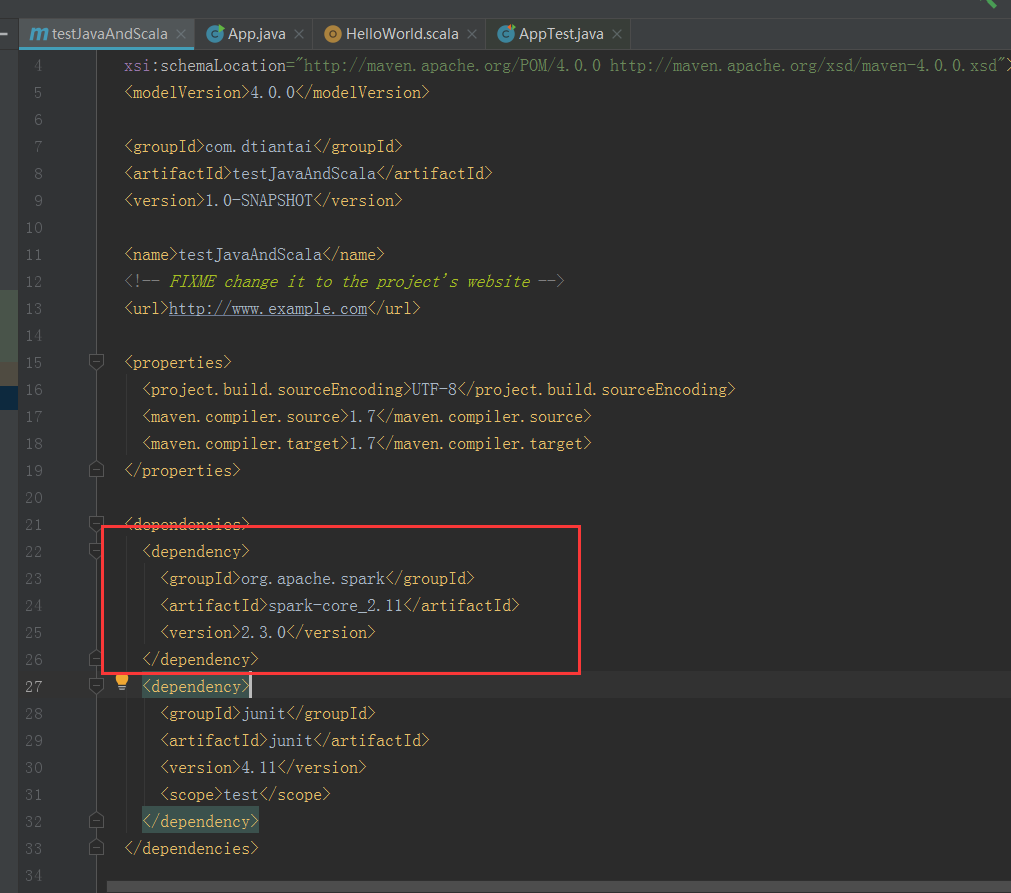

First, add the Spark dependency to the pom.xml file:

<dependency>

<groupId>org.apache.spark</groupId>

<artifactId>spark-core_2.11</artifactId>

<version>2.3.0</version>

</dependency>

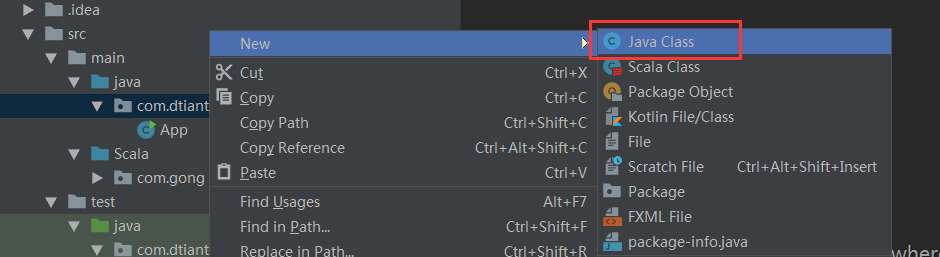

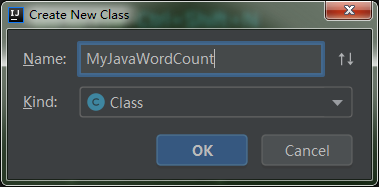

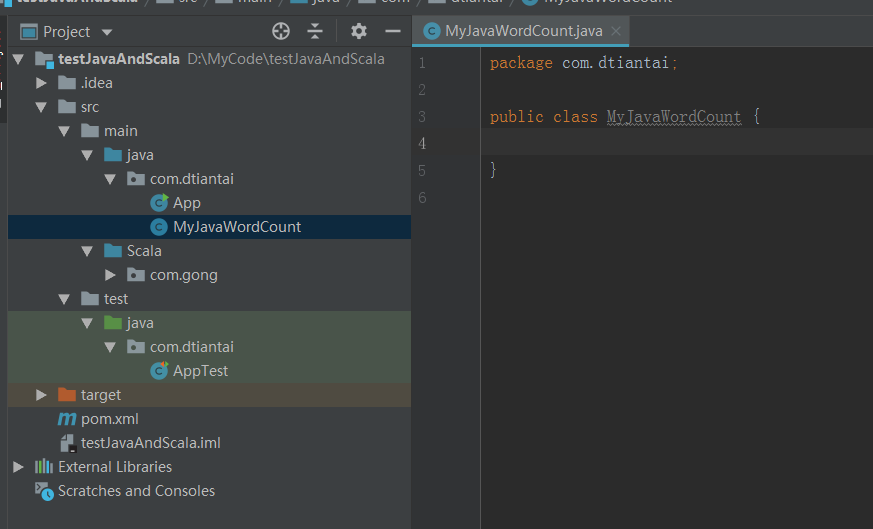

Create a new Java class

Write the code

package com.dtiantai;

import org.apache.spark.SparkConf;

import org.apache.spark.api.java.JavaPairRDD;

import org.apache.spark.api.java.JavaRDD;

import org.apache.spark.api.java.JavaSparkContext;

import org.apache.spark.api.java.function.FlatMapFunction;

import org.apache.spark.api.java.function.Function2;

import org.apache.spark.api.java.function.PairFunction;

import scala.Tuple2;

import java.util.Arrays;

import java.util.Iterator;

public class MyJavaWordCount {

public static void main(String[] args) {

// Validate arguments: both an input path and an output path are required

if (args.length < 2) {

System.err.println("Usage: MyJavaWordCount <input> <output>");

System.exit(1);

}

// Create the SparkConf and run locally with 2 threads

SparkConf conf = new SparkConf().setAppName("MyJavaWordCount");

conf.setMaster("local[2]");

JavaSparkContext sc=new JavaSparkContext(conf);

// Read the input data

JavaRDD<String> inputRDD = sc.textFile(args[0]);

// Split each line into words, then pair each word with 1 and sum the counts per word

JavaRDD<String> words=inputRDD.flatMap(new FlatMapFunction<String, String>() {

@Override

public Iterator<String> call(String s) throws Exception {

return Arrays.asList(s.split("\\s+")).iterator();

}

});

JavaPairRDD<String,Integer> result= words.mapToPair(new PairFunction<String, String, Integer>() {

@Override

public Tuple2<String, Integer> call(String s) throws Exception {

return new Tuple2<>(s, 1);

}

}).reduceByKey(new Function2<Integer, Integer, Integer>() {

@Override

public Integer call(Integer x, Integer y) throws Exception {

return x+y;

}

});

// Save the result

result.saveAsTextFile(args[1]);

// Stop the SparkContext

sc.stop();

}

}
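To check what the job should produce for a small input without starting Spark, the same split-and-count logic can be sketched with plain Java streams. This is only an illustration of the `flatMap` → `mapToPair` → `reduceByKey` steps above, not Spark code; the class `WordCountSketch` and the method `countWords` are hypothetical names introduced here.

```java
import java.util.Arrays;
import java.util.List;
import java.util.Map;
import java.util.TreeMap;
import java.util.stream.Collectors;

// Plain-Java sketch (no Spark): mirrors flatMap -> mapToPair -> reduceByKey.
final class WordCountSketch {

    // Hypothetical helper, not part of the original program.
    static Map<String, Integer> countWords(List<String> lines) {
        return lines.stream()
                // flatMap step: split every line on runs of whitespace
                .flatMap(line -> Arrays.stream(line.split("\\s+")))
                // mapToPair + reduceByKey step: start each word at 1, sum per word
                .collect(Collectors.toMap(word -> word, word -> 1, Integer::sum, TreeMap::new));
    }

    public static void main(String[] args) {
        List<String> lines = Arrays.asList("hello spark", "hello java");
        // prints {hello=2, java=1, spark=1}
        System.out.println(countWords(lines));
    }
}
```

The `TreeMap` is used only to make the printed order predictable; Spark's output order across partitions is not sorted.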

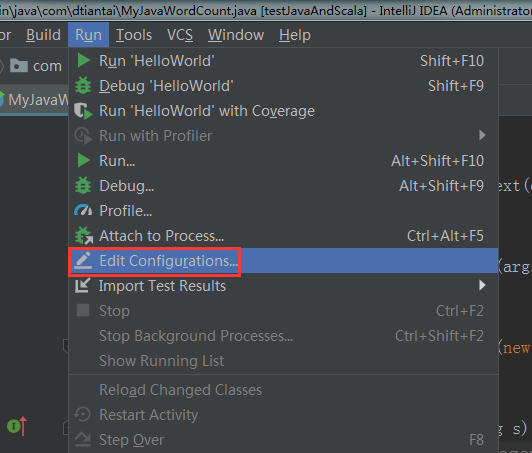

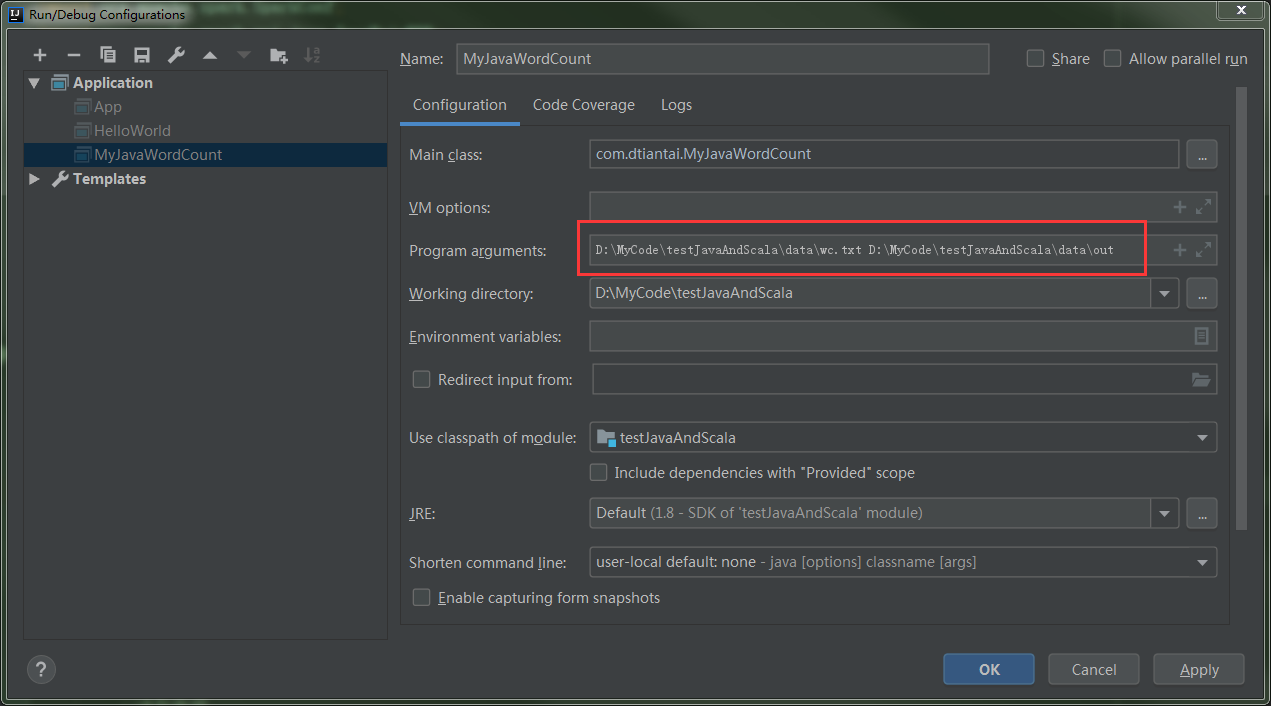

Configure the input and output directories (passed as the two program arguments)
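One thing to watch when re-running: with default settings, `saveAsTextFile` refuses to write into an output directory that already exists, so the directory from a previous run has to be removed first. A minimal sketch of a cleanup helper in plain Java follows; `OutputDirCleaner` and `deleteRecursively` are hypothetical names, and the path is whatever you configured as the second program argument.

```java
import java.io.IOException;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Comparator;
import java.util.List;
import java.util.stream.Collectors;
import java.util.stream.Stream;

// Hypothetical helper: removes a previous output directory before a re-run.
final class OutputDirCleaner {

    static void deleteRecursively(Path dir) throws IOException {
        if (!Files.exists(dir)) {
            return; // nothing to clean up
        }
        List<Path> ordered;
        try (Stream<Path> paths = Files.walk(dir)) {
            // Reverse order puts children before their parent directories
            ordered = paths.sorted(Comparator.reverseOrder()).collect(Collectors.toList());
        }
        for (Path p : ordered) {
            Files.delete(p);
        }
    }

    public static void main(String[] args) throws IOException {
        if (args.length < 1) {
            System.err.println("Usage: OutputDirCleaner <output-dir>");
            System.exit(1);
        }
        deleteRecursively(Paths.get(args[0]));
    }
}
```

Deleting by hand in the file explorer works just as well; the helper is only convenient when the job is run repeatedly.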

Run the program

D:\SoftWare\JDK8\bin\java.exe "-javaagent:D:\SoftWare\IDEA\IntelliJ IDEA 2019.1.3\lib\idea_rt.jar=52468:D:\SoftWare\IDEA\IntelliJ IDEA 2019.1.3\bin" -Dfile.encoding=UTF-8 -classpath D:\SoftWare\JDK8\jre\lib\charsets.jar;D:\SoftWare\JDK8\jre\lib\deploy.jar;D:\SoftWare\JDK8\jre\lib\ext\access-bridge-64.jar;D:\SoftWare\JDK8\jre\lib\ext\cldrdata.jar;D:\SoftWare\JDK8\jre\lib\ext\dnsns.jar;D:\SoftWare\JDK8\jre\lib\ext\jaccess.jar;D:\SoftWare\JDK8\jre\lib\ext\jfxrt.jar;D:\SoftWare\JDK8\jre\lib\ext\localedata.jar;D:\SoftWare\JDK8\jre\lib\ext\nashorn.jar;D:\SoftWare\JDK8\jre\lib\ext\sunec.jar;D:\SoftWare\JDK8\jre\lib\ext\sunjce_provider.jar;D:\SoftWare\JDK8\jre\lib\ext\sunmscapi.jar;D:\SoftWare\JDK8\jre\lib\ext\sunpkcs11.jar;D:\SoftWare\JDK8\jre\lib\ext\zipfs.jar;D:\SoftWare\JDK8\jre\lib\javaws.jar;D:\SoftWare\JDK8\jre\lib\jce.jar;D:\SoftWare\JDK8\jre\lib\jfr.jar;D:\SoftWare\JDK8\jre\lib\jfxswt.jar;D:\SoftWare\JDK8\jre\lib\jsse.jar;D:\SoftWare\JDK8\jre\lib\management-agent.jar;D:\SoftWare\JDK8\jre\lib\plugin.jar;D:\SoftWare\JDK8\jre\lib\resources.jar;D:\SoftWare\JDK8\jre\lib\rt.jar;D:\MyCode\testJavaAndScala\target\classes;D:\SoftWare\Scala\lib\scala-actors-2.11.0.jar;D:\SoftWare\Scala\lib\scala-actors-migration_2.11-1.1.0.jar;D:\SoftWare\Scala\lib\scala-library.jar;D:\SoftWare\Scala\lib\scala-parser-combinators_2.11-1.0.1.jar;D:\SoftWare\Scala\lib\scala-reflect.jar;D:\SoftWare\Scala\lib\scala-swing_2.11-1.0.1.jar;D:\SoftWare\Scala\lib\scala-xml_2.11-1.0.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-core_2.11\2.3.0\spark-core_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\avro\avro\1.7.7\avro-1.7.7.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\codehaus\jackson\jackson-core-asl\1.9.13\jackson-core-asl-1.9.13.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\codehaus\jackson\jackson-mapper-asl\1.9.13\jackson-mapper-asl-1.9.13.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\thoughtworks\paranamer\
paranamer\2.3\paranamer-2.3.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\commons\commons-compress\1.4.1\commons-compress-1.4.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\tukaani\xz\1.0\xz-1.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\avro\avro-mapred\1.7.7\avro-mapred-1.7.7-hadoop2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\avro\avro-ipc\1.7.7\avro-ipc-1.7.7.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\avro\avro-ipc\1.7.7\avro-ipc-1.7.7-tests.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\twitter\chill_2.11\0.8.4\chill_2.11-0.8.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\esotericsoftware\kryo-shaded\3.0.3\kryo-shaded-3.0.3.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\esotericsoftware\minlog\1.3.0\minlog-1.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\objenesis\objenesis\2.1\objenesis-2.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\twitter\chill-java\0.8.4\chill-java-0.8.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\xbean\xbean-asm5-shaded\4.4\xbean-asm5-shaded-4.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-client\2.6.5\hadoop-client-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-common\2.6.5\hadoop-common-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-cli\commons-cli\1.2\commons-cli-1.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\xmlenc\xmlenc\0.52\xmlenc-0.52.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-httpclient\commons-httpclient\3.1\commons-httpclient-3.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-io\commons-io\2.4\commons-io-2.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-collections\commons-collections\3.2.2\commons-collections-3.2.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-lang\commons-lang\2.6\commons-lang-2.6.jar;D:\SoftWare\Mave
n\apache-maven-3.6.1\Repository\commons-configuration\commons-configuration\1.6\commons-configuration-1.6.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-digester\commons-digester\1.8\commons-digester-1.8.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-beanutils\commons-beanutils\1.7.0\commons-beanutils-1.7.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-beanutils\commons-beanutils-core\1.8.0\commons-beanutils-core-1.8.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\google\protobuf\protobuf-java\2.5.0\protobuf-java-2.5.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\google\code\gson\gson\2.2.4\gson-2.2.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-auth\2.6.5\hadoop-auth-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\directory\server\apacheds-kerberos-codec\2.0.0-M15\apacheds-kerberos-codec-2.0.0-M15.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\directory\server\apacheds-i18n\2.0.0-M15\apacheds-i18n-2.0.0-M15.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\directory\api\api-asn1-api\1.0.0-M20\api-asn1-api-1.0.0-M20.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\directory\api\api-util\1.0.0-M20\api-util-1.0.0-M20.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\curator\curator-client\2.6.0\curator-client-2.6.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\htrace\htrace-core\3.0.4\htrace-core-3.0.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-hdfs\2.6.5\hadoop-hdfs-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\mortbay\jetty\jetty-util\6.1.26\jetty-util-6.1.26.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\xerces\xercesImpl\2.9.1\xercesImpl-2.9.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\xml-apis\xml-apis\1.3.04\xml-apis-1.3.04.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-mapreduce-client-app\2.
6.5\hadoop-mapreduce-client-app-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-mapreduce-client-common\2.6.5\hadoop-mapreduce-client-common-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-yarn-client\2.6.5\hadoop-yarn-client-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-yarn-server-common\2.6.5\hadoop-yarn-server-common-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-mapreduce-client-shuffle\2.6.5\hadoop-mapreduce-client-shuffle-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-yarn-api\2.6.5\hadoop-yarn-api-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-mapreduce-client-core\2.6.5\hadoop-mapreduce-client-core-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-yarn-common\2.6.5\hadoop-yarn-common-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\xml\bind\jaxb-api\2.2.2\jaxb-api-2.2.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\xml\stream\stax-api\1.0-2\stax-api-1.0-2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\codehaus\jackson\jackson-jaxrs\1.9.13\jackson-jaxrs-1.9.13.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\codehaus\jackson\jackson-xc\1.9.13\jackson-xc-1.9.13.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-mapreduce-client-jobclient\2.6.5\hadoop-mapreduce-client-jobclient-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\hadoop\hadoop-annotations\2.6.5\hadoop-annotations-2.6.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-launcher_2.11\2.3.0\spark-launcher_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-kvstore_2.11\2.3.0\spark-kvstore_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\fusesource\leveldbjni\leveldbjni-all\1.8\leveldbjni-all-1.
8.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\fasterxml\jackson\core\jackson-core\2.6.7\jackson-core-2.6.7.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\fasterxml\jackson\core\jackson-annotations\2.6.7\jackson-annotations-2.6.7.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-network-common_2.11\2.3.0\spark-network-common_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-network-shuffle_2.11\2.3.0\spark-network-shuffle_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-unsafe_2.11\2.3.0\spark-unsafe_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\net\java\dev\jets3t\jets3t\0.9.4\jets3t-0.9.4.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\httpcomponents\httpcore\4.4.1\httpcore-4.4.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\httpcomponents\httpclient\4.5\httpclient-4.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-codec\commons-codec\1.15-SNAPSHOT\commons-codec-1.15-20200118.020329-6.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\activation\activation\1.1.1\activation-1.1.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\bouncycastle\bcprov-jdk15on\1.52\bcprov-jdk15on-1.52.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\jamesmurty\utils\java-xmlbuilder\1.1\java-xmlbuilder-1.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\net\iharder\base64\2.3.8\base64-2.3.8.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\curator\curator-recipes\2.6.0\curator-recipes-2.6.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\curator\curator-framework\2.6.0\curator-framework-2.6.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\zookeeper\zookeeper\3.4.6\zookeeper-3.4.6.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\google\guava\guava\16.0.1\guava-16.0.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\servlet\javax
.servlet-api\3.1.0\javax.servlet-api-3.1.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\commons\commons-lang3\3.5\commons-lang3-3.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\commons\commons-math3\3.4.1\commons-math3-3.4.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\google\code\findbugs\jsr305\1.3.9\jsr305-1.3.9.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\slf4j\slf4j-api\1.7.16\slf4j-api-1.7.16.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\slf4j\jul-to-slf4j\1.7.16\jul-to-slf4j-1.7.16.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\slf4j\jcl-over-slf4j\1.7.16\jcl-over-slf4j-1.7.16.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\log4j\log4j\1.2.17\log4j-1.2.17.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\slf4j\slf4j-log4j12\1.7.16\slf4j-log4j12-1.7.16.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\ning\compress-lzf\1.0.3\compress-lzf-1.0.3.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\xerial\snappy\snappy-java\1.1.2.6\snappy-java-1.1.2.6.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\lz4\lz4-java\1.4.0\lz4-java-1.4.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\github\luben\zstd-jni\1.3.2-2\zstd-jni-1.3.2-2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\roaringbitmap\RoaringBitmap\0.5.11\RoaringBitmap-0.5.11.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\commons-net\commons-net\2.2\commons-net-2.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\scala-lang\scala-library\2.11.8\scala-library-2.11.8.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\json4s\json4s-jackson_2.11\3.2.11\json4s-jackson_2.11-3.2.11.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\json4s\json4s-core_2.11\3.2.11\json4s-core_2.11-3.2.11.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\json4s\json4s-ast_2.11\3.2.11\json4s-ast_2.11-3.2.11.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\scala-lang\scalap\2.11.0\scalap-
2.11.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\scala-lang\scala-compiler\2.11.0\scala-compiler-2.11.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\scala-lang\modules\scala-xml_2.11\1.0.1\scala-xml_2.11-1.0.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\scala-lang\modules\scala-parser-combinators_2.11\1.0.1\scala-parser-combinators_2.11-1.0.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\jersey\core\jersey-client\2.22.2\jersey-client-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\ws\rs\javax.ws.rs-api\2.0.1\javax.ws.rs-api-2.0.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\hk2\hk2-api\2.4.0-b34\hk2-api-2.4.0-b34.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\hk2\hk2-utils\2.4.0-b34\hk2-utils-2.4.0-b34.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\hk2\external\aopalliance-repackaged\2.4.0-b34\aopalliance-repackaged-2.4.0-b34.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\hk2\external\javax.inject\2.4.0-b34\javax.inject-2.4.0-b34.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\hk2\hk2-locator\2.4.0-b34\hk2-locator-2.4.0-b34.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\javassist\javassist\3.18.1-GA\javassist-3.18.1-GA.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\jersey\core\jersey-common\2.22.2\jersey-common-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\annotation\javax.annotation-api\1.2\javax.annotation-api-1.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\jersey\bundles\repackaged\jersey-guava\2.22.2\jersey-guava-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\hk2\osgi-resource-locator\1.0.1\osgi-resource-locator-1.0.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\jersey\core\jersey-server\2.22.2\jersey-server-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\j
ersey\media\jersey-media-jaxb\2.22.2\jersey-media-jaxb-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\javax\validation\validation-api\1.1.0.Final\validation-api-1.1.0.Final.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\jersey\containers\jersey-container-servlet\2.22.2\jersey-container-servlet-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\glassfish\jersey\containers\jersey-container-servlet-core\2.22.2\jersey-container-servlet-core-2.22.2.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\io\netty\netty-all\4.1.17.Final\netty-all-4.1.17.Final.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\io\netty\netty\3.9.9.Final\netty-3.9.9.Final.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\clearspring\analytics\stream\2.7.0\stream-2.7.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\io\dropwizard\metrics\metrics-core\3.1.5\metrics-core-3.1.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\io\dropwizard\metrics\metrics-jvm\3.1.5\metrics-jvm-3.1.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\io\dropwizard\metrics\metrics-json\3.1.5\metrics-json-3.1.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\io\dropwizard\metrics\metrics-graphite\3.1.5\metrics-graphite-3.1.5.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\fasterxml\jackson\core\jackson-databind\2.6.7.1\jackson-databind-2.6.7.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\fasterxml\jackson\module\jackson-module-scala_2.11\2.6.7.1\jackson-module-scala_2.11-2.6.7.1.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\scala-lang\scala-reflect\2.11.8\scala-reflect-2.11.8.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\com\fasterxml\jackson\module\jackson-module-paranamer\2.7.9\jackson-module-paranamer-2.7.9.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\ivy\ivy\2.4.0\ivy-2.4.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\oro\oro\2.0.8\oro-2.0.8.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\ne
t\razorvine\pyrolite\4.13\pyrolite-4.13.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\net\sf\py4j\py4j\0.10.6\py4j-0.10.6.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\spark\spark-tags_2.11\2.3.0\spark-tags_2.11-2.3.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\apache\commons\commons-crypto\1.0.0\commons-crypto-1.0.0.jar;D:\SoftWare\Maven\apache-maven-3.6.1\Repository\org\spark-project\spark\unused\1.0.0\unused-1.0.0.jar com.dtiantai.MyJavaWordCount D:\MyCode\testJavaAndScala\data\wc.txt D:\MyCode\testJavaAndScala\data\out

Using Spark's default log4j profile: org/apache/spark/log4j-defaults.properties

20/01/19 18:14:04 INFO SparkContext: Running Spark version 2.3.0

20/01/19 18:14:04 INFO SparkContext: Submitted application: MyJavaWordCount

20/01/19 18:14:04 INFO SecurityManager: Changing view acls to: admin

20/01/19 18:14:04 INFO SecurityManager: Changing modify acls to: admin

20/01/19 18:14:04 INFO SecurityManager: Changing view acls groups to:

20/01/19 18:14:04 INFO SecurityManager: Changing modify acls groups to:

20/01/19 18:14:04 INFO SecurityManager: SecurityManager: authentication disabled; ui acls disabled; users with view permissions: Set(admin); groups with view permissions: Set(); users with modify permissions: Set(admin); groups with modify permissions: Set()

20/01/19 18:14:05 INFO Utils: Successfully started service 'sparkDriver' on port 52491.

20/01/19 18:14:05 INFO SparkEnv: Registering MapOutputTracker

20/01/19 18:14:05 INFO SparkEnv: Registering BlockManagerMaster

20/01/19 18:14:05 INFO BlockManagerMasterEndpoint: Using org.apache.spark.storage.DefaultTopologyMapper for getting topology information

20/01/19 18:14:05 INFO BlockManagerMasterEndpoint: BlockManagerMasterEndpoint up

20/01/19 18:14:05 INFO DiskBlockManager: Created local directory at C:\Users\admin\AppData\Local\Temp\blockmgr-f405ac37-c1ff-4a8a-a490-1144a192f758

20/01/19 18:14:05 INFO MemoryStore: MemoryStore started with capacity 902.7 MB

20/01/19 18:14:05 INFO SparkEnv: Registering OutputCommitCoordinator

20/01/19 18:14:05 INFO Utils: Successfully started service 'SparkUI' on port 4040.

20/01/19 18:14:05 INFO SparkUI: Bound SparkUI to 0.0.0.0, and started at http://GX-GYM-D8178:4040

20/01/19 18:14:05 INFO Executor: Starting executor ID driver on host localhost

20/01/19 18:14:05 INFO Utils: Successfully started service 'org.apache.spark.network.netty.NettyBlockTransferService' on port 52504.

20/01/19 18:14:05 INFO NettyBlockTransferService: Server created on GX-GYM-D8178:52504

20/01/19 18:14:05 INFO BlockManager: Using org.apache.spark.storage.RandomBlockReplicationPolicy for block replication policy

20/01/19 18:14:05 INFO BlockManagerMaster: Registering BlockManager BlockManagerId(driver, GX-GYM-D8178, 52504, None)

20/01/19 18:14:05 INFO BlockManagerMasterEndpoint: Registering block manager GX-GYM-D8178:52504 with 902.7 MB RAM, BlockManagerId(driver, GX-GYM-D8178, 52504, None)

20/01/19 18:14:05 INFO BlockManagerMaster: Registered BlockManager BlockManagerId(driver, GX-GYM-D8178, 52504, None)

20/01/19 18:14:05 INFO BlockManager: Initialized BlockManager: BlockManagerId(driver, GX-GYM-D8178, 52504, None)

20/01/19 18:14:06 INFO MemoryStore: Block broadcast_0 stored as values in memory (estimated size 214.5 KB, free 902.5 MB)

20/01/19 18:14:07 INFO MemoryStore: Block broadcast_0_piece0 stored as bytes in memory (estimated size 20.4 KB, free 902.5 MB)

20/01/19 18:14:07 INFO BlockManagerInfo: Added broadcast_0_piece0 in memory on GX-GYM-D8178:52504 (size: 20.4 KB, free: 902.7 MB)

20/01/19 18:14:07 INFO SparkContext: Created broadcast 0 from textFile at MyJavaWordCount.java:30

20/01/19 18:14:07 INFO FileInputFormat: Total input paths to process : 1

20/01/19 18:14:07 INFO deprecation: mapred.output.dir is deprecated. Instead, use mapreduce.output.fileoutputformat.outputdir

20/01/19 18:14:07 INFO SparkContext: Starting job: runJob at SparkHadoopWriter.scala:78

20/01/19 18:14:07 INFO DAGScheduler: Registering RDD 3 (mapToPair at MyJavaWordCount.java:41)

20/01/19 18:14:07 INFO DAGScheduler: Got job 0 (runJob at SparkHadoopWriter.scala:78) with 2 output partitions

20/01/19 18:14:07 INFO DAGScheduler: Final stage: ResultStage 1 (runJob at SparkHadoopWriter.scala:78)

20/01/19 18:14:07 INFO DAGScheduler: Parents of final stage: List(ShuffleMapStage 0)

20/01/19 18:14:07 INFO DAGScheduler: Missing parents: List(ShuffleMapStage 0)

20/01/19 18:14:07 INFO DAGScheduler: Submitting ShuffleMapStage 0 (MapPartitionsRDD[3] at mapToPair at MyJavaWordCount.java:41), which has no missing parents

20/01/19 18:14:07 INFO MemoryStore: Block broadcast_1 stored as values in memory (estimated size 5.1 KB, free 902.5 MB)

20/01/19 18:14:07 INFO MemoryStore: Block broadcast_1_piece0 stored as bytes in memory (estimated size 2.9 KB, free 902.5 MB)

20/01/19 18:14:07 INFO BlockManagerInfo: Added broadcast_1_piece0 in memory on GX-GYM-D8178:52504 (size: 2.9 KB, free: 902.7 MB)

20/01/19 18:14:07 INFO SparkContext: Created broadcast 1 from broadcast at DAGScheduler.scala:1039

20/01/19 18:14:07 INFO DAGScheduler: Submitting 2 missing tasks from ShuffleMapStage 0 (MapPartitionsRDD[3] at mapToPair at MyJavaWordCount.java:41) (first 15 tasks are for partitions Vector(0, 1))

20/01/19 18:14:07 INFO TaskSchedulerImpl: Adding task set 0.0 with 2 tasks

20/01/19 18:14:07 INFO TaskSetManager: Starting task 0.0 in stage 0.0 (TID 0, localhost, executor driver, partition 0, PROCESS_LOCAL, 7880 bytes)

20/01/19 18:14:07 INFO TaskSetManager: Starting task 1.0 in stage 0.0 (TID 1, localhost, executor driver, partition 1, PROCESS_LOCAL, 7880 bytes)

20/01/19 18:14:07 INFO Executor: Running task 1.0 in stage 0.0 (TID 1)

20/01/19 18:14:07 INFO Executor: Running task 0.0 in stage 0.0 (TID 0)

20/01/19 18:14:07 INFO HadoopRDD: Input split: file:/D:/MyCode/testJavaAndScala/data/wc.txt:0+32

20/01/19 18:14:07 INFO HadoopRDD: Input split: file:/D:/MyCode/testJavaAndScala/data/wc.txt:32+33

20/01/19 18:14:07 INFO Executor: Finished task 1.0 in stage 0.0 (TID 1). 1154 bytes result sent to driver

20/01/19 18:14:07 INFO Executor: Finished task 0.0 in stage 0.0 (TID 0). 1111 bytes result sent to driver

20/01/19 18:14:07 INFO TaskSetManager: Finished task 0.0 in stage 0.0 (TID 0) in 194 ms on localhost (executor driver) (1/2)

20/01/19 18:14:07 INFO TaskSetManager: Finished task 1.0 in stage 0.0 (TID 1) in 182 ms on localhost (executor driver) (2/2)

20/01/19 18:14:07 INFO TaskSchedulerImpl: Removed TaskSet 0.0, whose tasks have all completed, from pool

20/01/19 18:14:07 INFO DAGScheduler: ShuffleMapStage 0 (mapToPair at MyJavaWordCount.java:41) finished in 0.310 s

20/01/19 18:14:07 INFO DAGScheduler: looking for newly runnable stages

20/01/19 18:14:07 INFO DAGScheduler: running: Set()

20/01/19 18:14:07 INFO DAGScheduler: waiting: Set(ResultStage 1)

20/01/19 18:14:07 INFO DAGScheduler: failed: Set()

20/01/19 18:14:07 INFO DAGScheduler: Submitting ResultStage 1 (MapPartitionsRDD[5] at saveAsTextFile at MyJavaWordCount.java:54), which has no missing parents

20/01/19 18:14:07 INFO MemoryStore: Block broadcast_2 stored as values in memory (estimated size 65.5 KB, free 902.4 MB)

20/01/19 18:14:07 INFO MemoryStore: Block broadcast_2_piece0 stored as bytes in memory (estimated size 23.4 KB, free 902.4 MB)

20/01/19 18:14:07 INFO BlockManagerInfo: Added broadcast_2_piece0 in memory on GX-GYM-D8178:52504 (size: 23.4 KB, free: 902.7 MB)

20/01/19 18:14:07 INFO SparkContext: Created broadcast 2 from broadcast at DAGScheduler.scala:1039

20/01/19 18:14:07 INFO DAGScheduler: Submitting 2 missing tasks from ResultStage 1 (MapPartitionsRDD[5] at saveAsTextFile at MyJavaWordCount.java:54) (first 15 tasks are for partitions Vector(0, 1))

20/01/19 18:14:07 INFO TaskSchedulerImpl: Adding task set 1.0 with 2 tasks

20/01/19 18:14:07 INFO TaskSetManager: Starting task 0.0 in stage 1.0 (TID 2, localhost, executor driver, partition 0, ANY, 7649 bytes)

20/01/19 18:14:07 INFO TaskSetManager: Starting task 1.0 in stage 1.0 (TID 3, localhost, executor driver, partition 1, ANY, 7649 bytes)

20/01/19 18:14:07 INFO Executor: Running task 0.0 in stage 1.0 (TID 2)

20/01/19 18:14:07 INFO Executor: Running task 1.0 in stage 1.0 (TID 3)

20/01/19 18:14:07 INFO ShuffleBlockFetcherIterator: Getting 1 non-empty blocks out of 2 blocks

20/01/19 18:14:07 INFO ShuffleBlockFetcherIterator: Getting 2 non-empty blocks out of 2 blocks

20/01/19 18:14:07 INFO ShuffleBlockFetcherIterator: Started 0 remote fetches in 8 ms

20/01/19 18:14:07 INFO ShuffleBlockFetcherIterator: Started 0 remote fetches in 4 ms

20/01/19 18:14:08 INFO FileOutputCommitter: Saved output of task 'attempt_20200119181407_0005_m_000000_0' to file:/D:/MyCode/testJavaAndScala/data/out/_temporary/0/task_20200119181407_0005_m_000000

20/01/19 18:14:08 INFO SparkHadoopMapRedUtil: attempt_20200119181407_0005_m_000000_0: Committed

20/01/19 18:14:08 INFO FileOutputCommitter: Saved output of task 'attempt_20200119181407_0005_m_000001_0' to file:/D:/MyCode/testJavaAndScala/data/out/_temporary/0/task_20200119181407_0005_m_000001

20/01/19 18:14:08 INFO SparkHadoopMapRedUtil: attempt_20200119181407_0005_m_000001_0: Committed

20/01/19 18:14:08 INFO Executor: Finished task 0.0 in stage 1.0 (TID 2). 1502 bytes result sent to driver

20/01/19 18:14:08 INFO Executor: Finished task 1.0 in stage 1.0 (TID 3). 1502 bytes result sent to driver

20/01/19 18:14:08 INFO TaskSetManager: Finished task 1.0 in stage 1.0 (TID 3) in 172 ms on localhost (executor driver) (1/2)

20/01/19 18:14:08 INFO TaskSetManager: Finished task 0.0 in stage 1.0 (TID 2) in 174 ms on localhost (executor driver) (2/2)

20/01/19 18:14:08 INFO TaskSchedulerImpl: Removed TaskSet 1.0, whose tasks have all completed, from pool

20/01/19 18:14:08 INFO DAGScheduler: ResultStage 1 (runJob at SparkHadoopWriter.scala:78) finished in 0.205 s

20/01/19 18:14:08 INFO DAGScheduler: Job 0 finished: runJob at SparkHadoopWriter.scala:78, took 0.652025 s

20/01/19 18:14:08 INFO SparkHadoopWriter: Job job_20200119181407_0005 committed.

20/01/19 18:14:08 INFO SparkUI: Stopped Spark web UI at http://GX-GYM-D8178:4040

20/01/19 18:14:08 INFO MapOutputTrackerMasterEndpoint: MapOutputTrackerMasterEndpoint stopped!

20/01/19 18:14:08 INFO MemoryStore: MemoryStore cleared

20/01/19 18:14:08 INFO BlockManager: BlockManager stopped

20/01/19 18:14:08 INFO BlockManagerMaster: BlockManagerMaster stopped

20/01/19 18:14:08 INFO OutputCommitCoordinator$OutputCommitCoordinatorEndpoint: OutputCommitCoordinator stopped!

20/01/19 18:14:08 INFO SparkContext: Successfully stopped SparkContext

20/01/19 18:14:08 INFO ShutdownHookManager: Shutdown hook called

20/01/19 18:14:08 INFO ShutdownHookManager: Deleting directory C:\Users\admin\AppData\Local\Temp\spark-09da7888-77f6-41df-b053-d9cc5d3f1b2b

Process finished with exit code 0

View the output
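The output path is a directory, not a single file: the log above shows two output partitions, so the counts are spread over `part-*` files next to marker files such as `_SUCCESS`. Each line is the text form of a `Tuple2`, for example `(hello,2)`. A minimal sketch for merging those files back into one map is shown below; `ResultReader` and `readCounts` are hypothetical names, and the parsing assumes the default `(word,count)` line format.

```java
import java.io.IOException;
import java.nio.charset.StandardCharsets;
import java.nio.file.DirectoryStream;
import java.nio.file.Files;
import java.nio.file.Path;
import java.nio.file.Paths;
import java.util.Map;
import java.util.TreeMap;

// Hypothetical helper: merges the part-* files written by saveAsTextFile.
final class ResultReader {

    static Map<String, Integer> readCounts(Path outputDir) throws IOException {
        Map<String, Integer> counts = new TreeMap<>();
        // The glob skips marker and checksum files that do not start with "part-"
        try (DirectoryStream<Path> parts = Files.newDirectoryStream(outputDir, "part-*")) {
            for (Path part : parts) {
                for (String line : Files.readAllLines(part, StandardCharsets.UTF_8)) {
                    if (!line.startsWith("(") || !line.endsWith(")")) {
                        continue; // not a "(word,count)" line
                    }
                    int comma = line.lastIndexOf(',');
                    if (comma < 1) {
                        continue;
                    }
                    String word = line.substring(1, comma);
                    int count = Integer.parseInt(line.substring(comma + 1, line.length() - 1));
                    counts.merge(word, count, Integer::sum);
                }
            }
        }
        return counts;
    }

    public static void main(String[] args) throws IOException {
        if (args.length < 1) {
            System.err.println("Usage: ResultReader <output-dir>");
            System.exit(1);
        }
        System.out.println(readCounts(Paths.get(args[0])));
    }
}
```

Opening the `part-*` files in any text editor shows the same content; the helper is only useful when a single combined view is wanted.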

Source: https://www.cnblogs.com/braveym/p/12215142.html