PyTorch neural network basics

NumPy vs. torch comparison

# Compare NumPy and PyTorch

# 2020/2/12

import torch

import numpy as np

np_data = np.arange(6).reshape((2, 3))

torch_data = torch.from_numpy(np_data)

print(

'\nnumpy', np_data,

'\ntorch', torch_data,

)

tensor2array = torch_data.numpy()

print('\ntensor2array', tensor2array)

data = [1, -2, -3, 4]

tensor = torch.FloatTensor(data)

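tensor = tensor.reshape((2, 2))

print(
    torch.abs(tensor),  # element-wise absolute value (NumPy counterpart: np.abs)
    '\n',
    torch.mm(tensor, tensor)  # matrix product (NumPy counterpart: np.matmul)
)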
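One detail about the conversion above is worth a small check: on CPU, `torch.from_numpy` does not copy the data, so the array and the tensor share memory, whereas `torch.tensor` makes an independent copy. The sketch below is only an illustration; the names `shared_value`, `copied`, and `copied_value` are not from the original script.

```python
import numpy as np
import torch

np_data = np.arange(6).reshape((2, 3))
torch_data = torch.from_numpy(np_data)   # shares memory with np_data (CPU only)

np_data[0, 0] = 100                      # modify the NumPy array in place
shared_value = torch_data[0, 0].item()   # the tensor sees the change

copied = torch.tensor(np_data)           # torch.tensor() makes an independent copy
np_data[0, 0] = -1                       # modify the array again
copied_value = copied[0, 0].item()       # the copy keeps the old value

print(shared_value, copied_value)
```

If the shared memory is not wanted, copying explicitly (as with `torch.tensor` here) avoids surprises when one side is modified in place.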

Variable

# Variable

# 2020/2/12

import torch

from torch.autograd import Variable

tensor = torch.FloatTensor([[1, 2], [3, 4]])

variable = Variable(tensor, requires_grad=True)  # requires_grad=True enables backpropagation (gradient computation); since PyTorch 0.4, Variable simply returns a Tensor

t_out = torch.mean(tensor*tensor)

v_out = torch.mean(variable*variable)

print(

t_out,

'\n', v_out

)

v_out.backward()

print(variable.grad)

print(variable.data.numpy())
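Since PyTorch 0.4 the same computation can be written without `Variable`, by creating the tensor with `requires_grad=True`. The sketch below repeats the example above under that assumption and checks the gradient by hand: for out = mean(x * x) over 4 elements, d(out)/dx = 2x/4 = x/2. The names `x`, `out`, and `grad` are illustrative.

```python
import torch

# Tensor that tracks gradients directly, no Variable wrapper needed
x = torch.tensor([[1., 2.], [3., 4.]], requires_grad=True)

out = torch.mean(x * x)   # (1 + 4 + 9 + 16) / 4
out.backward()            # fills x.grad with d(out)/dx = x / 2
grad = x.grad

print(out.item())
print(grad)
```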

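Activation functions

Each neuron in a neural network takes the outputs of the previous layer's neurons as its input and passes its own output on to the next layer; input-layer neurons pass the input feature values straight to the next layer (a hidden layer or the output layer). In a multi-layer network, the output of a node in one layer and the input of a node in the next layer are related by a function, which is called the activation function.

- ReLU

- sigmoid

- tanh

- softplus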
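For reference, the four functions listed above have short closed forms. The sketch below writes them in plain Python with the standard textbook definitions, for scalars only; it is independent of the PyTorch implementations used in the plotting script.

```python
import math

def relu(x):
    # max(0, x): passes positive values through, zeroes out negatives
    return max(0.0, x)

def sigmoid(x):
    # 1 / (1 + e^-x): squashes any real number into (0, 1)
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    # hyperbolic tangent: squashes any real number into (-1, 1)
    return math.tanh(x)

def softplus(x):
    # log(1 + e^x): a smooth approximation of ReLU
    return math.log(1.0 + math.exp(x))

for v in (-5.0, 0.0, 5.0):
    print(v, relu(v), sigmoid(v), tanh(v), softplus(v))
```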

# Activation functions

# 2020/2/13

import torch

import torch.nn.functional as F

from torch.autograd import Variable

import matplotlib.pyplot as plt

# fake data

x = torch.linspace(-5, 5, 200)

x = Variable(x)

x_np = x.data.numpy()

y_relu = torch.relu(x).data.numpy()

y_sigmoid = torch.sigmoid(x).data.numpy()

y_tanh = torch.tanh(x).data.numpy()

y_softplus = F.softplus(x).data.numpy()

plt.figure(1, figsize=(8, 6))

plt.subplot(221)

plt.plot(x_np, y_relu, c='red', label='relu')

plt.ylim((-1, 5))

plt.legend(loc='best')

plt.subplot(222)

plt.plot(x_np, y_sigmoid, c='red', label='sigmoid')

plt.ylim((-0.2, 1.2))

plt.legend(loc='best')

plt.subplot(223)

plt.plot(x_np, y_tanh, c='red', label='tanh')

plt.ylim((-1.2, 1.2))

plt.legend(loc='best')

plt.subplot(224)

plt.plot(x_np, y_softplus, c='red', label='softplus')

plt.ylim((-0.2, 6))

plt.legend(loc='best')

plt.show()

Building your first neural network

Regression

# Regression

# 2020/2/13

"""

View more, visit my tutorial page: https://morvanzhou.github.io/tutorials/

My Youtube Channel: https://www.youtube.com/user/MorvanZhou

Dependencies:

torch: 0.4

matplotlib

"""

import torch

import torch.nn.functional as F

import matplotlib.pyplot as plt

# torch.manual_seed(1) # reproducible

x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1) # nn.Linear expects 2-D input (batch, features); torch.unsqueeze adds the missing dimension, shape=(100, 1)

y = x.pow(2) + 0.2*torch.rand(x.size()) # noisy y data (tensor), shape=(100, 1)

# Before PyTorch 0.4, tensors had to be wrapped in Variable before training.

# That is deprecated since PyTorch 0.4: autograd now supports tensors directly

# x, y = Variable(x), Variable(y)

# plt.scatter(x.data.numpy(), y.data.numpy())

# plt.show()

class Net(torch.nn.Module):
    def __init__(self, n_feature, n_hidden, n_output):
        super(Net, self).__init__()
        self.hidden = torch.nn.Linear(n_feature, n_hidden)  # hidden layer
        self.predict = torch.nn.Linear(n_hidden, n_output)  # output layer

    def forward(self, x):
        x = F.relu(self.hidden(x))  # activation function for hidden layer
        x = self.predict(x)  # linear output
        return x

net = Net(n_feature=1, n_hidden=10, n_output=1) # define the network

print(net) # net architecture

optimizer = torch.optim.SGD(net.parameters(), lr=0.2)

loss_func = torch.nn.MSELoss() # this is for regression mean squared loss

plt.ion()  # interactive mode, so the figure can be redrawn during training

for t in range(200):
    prediction = net(x)  # input x and predict based on x
    loss = loss_func(prediction, y)  # must be (1. nn output, 2. target)

    optimizer.zero_grad()  # clear gradients for next train
    loss.backward()  # backpropagation, compute gradients
    optimizer.step()  # apply gradients

    if t % 5 == 0:
        # plot and show learning process
        plt.cla()
        plt.scatter(x.data.numpy(), y.data.numpy())
        plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)
        plt.text(0.5, 0, 'Loss=%.4f' % loss.data.numpy(), fontdict={'size': 20, 'color': 'red'})
        plt.pause(0.1)

plt.ioff()

plt.show()

Classification

# Classification

# 2020/2/13

import torch

import torch.nn.functional as F

import matplotlib.pyplot as plt

# torch.manual_seed(1) # reproducible

# make fake data

n_data = torch.ones(100, 2)

x0 = torch.normal(2*n_data, 1) # class0 x data (tensor), shape=(100, 2)

y0 = torch.zeros(100) # class0 y data (tensor), shape=(100,)

x1 = torch.normal(-2*n_data, 1) # class1 x data (tensor), shape=(100, 2)

y1 = torch.ones(100) # class1 y data (tensor), shape=(100,)

x = torch.cat((x0, x1), 0).type(torch.FloatTensor) # shape (200, 2) FloatTensor = 32-bit floating

y = torch.cat((y0, y1), 0).type(torch.LongTensor) # shape (200,) LongTensor = 64-bit integer

# The code below is deprecated in Pytorch 0.4. Now, autograd directly supports tensors

# x, y = Variable(x), Variable(y)

# plt.scatter(x.data.numpy()[:, 0], x.data.numpy()[:, 1], c=y.data.numpy(), s=100, lw=0, cmap='RdYlGn')

# plt.show()

class Net(torch.nn.Module):
    def __init__(self, n_feature, n_hidden, n_output):
        super(Net, self).__init__()
        self.hidden = torch.nn.Linear(n_feature, n_hidden)  # hidden layer
        self.out = torch.nn.Linear(n_hidden, n_output)  # output layer

    def forward(self, x):
        x = F.relu(self.hidden(x))  # activation function for hidden layer
        x = self.out(x)  # raw class scores (logits)
        return x

net = Net(n_feature=2, n_hidden=10, n_output=2) # define the network

print(net) # net architecture

optimizer = torch.optim.SGD(net.parameters(), lr=0.02)

loss_func = torch.nn.CrossEntropyLoss()  # suited to classification; the target labels are class indices, NOT one-hot vectors

plt.ion()  # interactive mode, so the figure can be redrawn during training

for t in range(100):
    out = net(x)  # input x and predict based on x
    loss = loss_func(out, y)  # must be (1. nn output, 2. target), the target label is NOT one-hotted

    optimizer.zero_grad()  # clear gradients for next train
    loss.backward()  # backpropagation, compute gradients
    optimizer.step()  # apply gradients

    if t % 2 == 0:
        # plot and show learning process
        plt.cla()
        prediction = torch.max(out, 1)[1]  # index of the largest score = predicted class
        pred_y = prediction.data.numpy()
        target_y = y.data.numpy()
        plt.scatter(x.data.numpy()[:, 0], x.data.numpy()[:, 1], c=pred_y, s=100, lw=0, cmap='RdYlGn')
        accuracy = float((pred_y == target_y).astype(int).sum()) / float(target_y.size)
        plt.text(1.5, -4, 'Accuracy=%.2f' % accuracy, fontdict={'size': 20, 'color': 'red'})
        plt.pause(0.1)

plt.ioff()

plt.show()
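The classification script passes the raw network outputs and integer class labels straight to `CrossEntropyLoss`. The sketch below, using made-up scores for two samples, shows what that implies: softmax turns the scores into probabilities, `torch.max(..., 1)[1]` picks the predicted class, and the cross-entropy loss matches `log_softmax` followed by `nll_loss`. The values in `logits` and `target` are illustrative assumptions.

```python
import torch
import torch.nn.functional as F

logits = torch.tensor([[2.0, -1.0], [0.5, 1.5]])   # made-up outputs: 2 samples, 2 classes
target = torch.tensor([0, 1])                      # class indices, not one-hot vectors

probs = F.softmax(logits, dim=1)                   # each row sums to 1
pred = torch.max(logits, 1)[1]                     # index of the largest score per row

loss_a = F.cross_entropy(logits, target)
loss_b = F.nll_loss(F.log_softmax(logits, dim=1), target)  # same quantity in two steps

print(probs, pred, loss_a.item(), loss_b.item())
```

This is why the network's `forward` returns unnormalized scores: the softmax step is folded into the loss.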

Quick build method

net2 = torch.nn.Sequential(  # layers are stacked in order, one after another
    torch.nn.Linear(1, 10),
    torch.nn.ReLU(),
    torch.nn.Linear(10, 1)
)
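As a quick sanity check on the `Sequential` version, the sketch below builds the same three layers, counts the trainable parameters, and runs a dummy batch through the network. Note that the activation appears here as a layer (`torch.nn.ReLU`) rather than as a function call inside `forward`. The names `n_params` and `out` are illustrative.

```python
import torch

net2 = torch.nn.Sequential(
    torch.nn.Linear(1, 10),   # 10 weights + 10 biases
    torch.nn.ReLU(),          # no parameters
    torch.nn.Linear(10, 1),   # 10 weights + 1 bias
)

n_params = sum(p.numel() for p in net2.parameters())
out = net2(torch.zeros(4, 1))  # dummy batch of 4 samples with 1 feature each

print(net2)
print(n_params, out.shape)
```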

Saving and loading

import torch

import matplotlib.pyplot as plt

# torch.manual_seed(1) # reproducible

# fake data

x = torch.unsqueeze(torch.linspace(-1, 1, 100), dim=1) # x data (tensor), shape=(100, 1)

y = x.pow(2) + 0.2*torch.rand(x.size()) # noisy y data (tensor), shape=(100, 1)

# The code below is deprecated in Pytorch 0.4. Now, autograd directly supports tensors

# x, y = Variable(x, requires_grad=False), Variable(y, requires_grad=False)

def save():
    # train net1, plot its fit, then save it
    net1 = torch.nn.Sequential(
        torch.nn.Linear(1, 10),
        torch.nn.ReLU(),
        torch.nn.Linear(10, 1)
    )
    optimizer = torch.optim.SGD(net1.parameters(), lr=0.5)
    loss_func = torch.nn.MSELoss()

    for t in range(100):
        prediction = net1(x)
        loss = loss_func(prediction, y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

    # plot result
    plt.figure(1, figsize=(10, 3))
    plt.subplot(131)
    plt.title('Net1')
    plt.scatter(x.data.numpy(), y.data.numpy())
    plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)

    # 2 ways to save the net
    torch.save(net1, 'net.pkl')  # save entire net
    torch.save(net1.state_dict(), 'net_params.pkl')  # save only the parameters


def restore_net():
    # restore entire net1 to net2
    # note: recent PyTorch releases (2.6 and later, as far as I know) may need
    # torch.load('net.pkl', weights_only=False) to unpickle a whole module
    net2 = torch.load('net.pkl')
    prediction = net2(x)

    # plot result
    plt.subplot(132)
    plt.title('Net2')
    plt.scatter(x.data.numpy(), y.data.numpy())
    plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)


def restore_params():
    # restore only the parameters in net1 to net3;
    # net3 must be built with the same architecture as net1
    net3 = torch.nn.Sequential(
        torch.nn.Linear(1, 10),
        torch.nn.ReLU(),
        torch.nn.Linear(10, 1)
    )

    # copy net1's parameters into net3
    net3.load_state_dict(torch.load('net_params.pkl'))
    prediction = net3(x)

    # plot result
    plt.subplot(133)
    plt.title('Net3')
    plt.scatter(x.data.numpy(), y.data.numpy())
    plt.plot(x.data.numpy(), prediction.data.numpy(), 'r-', lw=5)
    plt.show()

# save net1

save()

# restore entire net (may be slow)

restore_net()

# restore only the net parameters

restore_params()

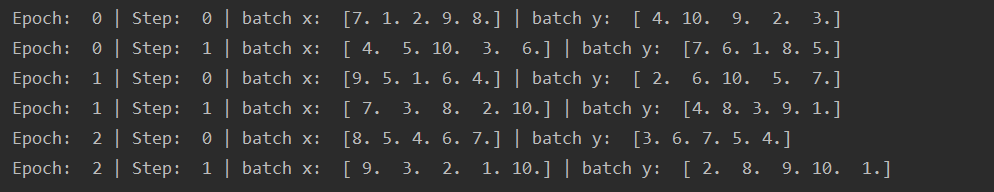
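The parameters-only route can be checked without touching the disk. The sketch below is a minimal round trip under a few assumptions: an in-memory `io.BytesIO` buffer stands in for `net_params.pkl`, and `make_net` is a hypothetical helper that rebuilds the same architecture as `net1`. After loading, a freshly initialized network should produce exactly the same outputs as the saved one.

```python
import io
import torch

def make_net():
    # hypothetical helper: same architecture as net1 in the script above
    return torch.nn.Sequential(
        torch.nn.Linear(1, 10),
        torch.nn.ReLU(),
        torch.nn.Linear(10, 1),
    )

net_a = make_net()
buffer = io.BytesIO()                       # in-memory stand-in for 'net_params.pkl'
torch.save(net_a.state_dict(), buffer)      # save only the parameters

buffer.seek(0)                              # rewind before reading
net_b = make_net()                          # fresh network with different random weights
net_b.load_state_dict(torch.load(buffer))   # copy net_a's parameters into net_b

x = torch.linspace(-1, 1, 5).unsqueeze(1)
same = torch.allclose(net_a(x), net_b(x))
print(same)
```

Saving only the `state_dict` keeps the file independent of how the model class is defined or pickled, at the cost of having to rebuild the architecture before loading.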

Mini-batch training

import torch

import torch.utils.data as Data

BATCH_SIZE = 5

x = torch.linspace(1, 10, 10)

y = torch.linspace(10, 1, 10)

torch_dataset = Data.TensorDataset(x, y)

loader = Data.DataLoader(

dataset=torch_dataset,

batch_size=BATCH_SIZE,

shuffle=True,

)

for epoch in range(3):  # go through the whole dataset 3 times
    for step, (batch_x, batch_y) in enumerate(loader):  # one mini-batch per step
        print('Epoch: ', epoch, '| Step: ', step, '| batch x: ',
              batch_x.numpy(), '| batch y: ', batch_y.numpy())
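With `BATCH_SIZE = 5` the 10 samples split evenly into two batches. When the batch size does not divide the dataset size, `DataLoader` by default returns whatever is left as a smaller final batch. The sketch below illustrates this with a batch size of 8, an assumed value chosen only for the demonstration, and with shuffling turned off so the result is deterministic.

```python
import torch
import torch.utils.data as Data

x = torch.linspace(1, 10, 10)
y = torch.linspace(10, 1, 10)

# 10 samples with batch_size=8: one full batch, then the 2 remaining samples
loader = Data.DataLoader(Data.TensorDataset(x, y), batch_size=8, shuffle=False)

batch_sizes = [len(batch_x) for batch_x, batch_y in loader]
print(batch_sizes)  # → [8, 2]
```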

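Optimizers: speeding up neural network training (deep learning)

- torch.optim

- SGD (Stochastic Gradient Descent)

  SGD updates the parameters using a different portion (mini-batch) of the training set at each step. Its biggest drawbacks are that it descends slowly and that it can keep oscillating between the two sides of a ravine or get stuck at a local optimum.

- Momentum

  Gradient descent with momentum computes an exponentially weighted average of the gradients and uses it to update the weights; it almost always runs faster than standard gradient descent. (Intuition: going downhill, inertia makes the descent faster where the slope is steeper.)

- AdaGrad

  For parameters that are updated often, we have already accumulated a lot of knowledge and do not want a single sample to influence them too much, so the learning rate should be smaller. For parameters that are updated only occasionally, we know too little and want to learn more from each sample that does show up, so the learning rate should be larger. (Intuition: a pair of uncomfortable shoes that corrects steps in the wrong direction.)

- RMSprop

  Downhill + uncomfortable shoes (but not quite the complete combination).

- Adam

  Momentum + AdaGrad: a momentum term combined with per-parameter adaptive learning rates.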
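To make the differences between the update rules above concrete, the sketch below applies simplified textbook versions of SGD, Momentum, RMSprop, and Adam to a toy one-parameter loss f(w) = w**2. These are illustrative scalar implementations under assumed hyperparameters, not the exact code inside `torch.optim`; the function names and the starting point 5.0 are made up for the example.

```python
import math

def grad(w):
    return 2.0 * w  # gradient of the toy loss f(w) = w**2

def run_sgd(w, lr=0.1, steps=100):
    for _ in range(steps):
        w -= lr * grad(w)                     # plain gradient step
    return w

def run_momentum(w, lr=0.1, mu=0.8, steps=100):
    v = 0.0
    for _ in range(steps):
        v = mu * v + grad(w)                  # accumulate past gradients (inertia)
        w -= lr * v
    return w

def run_rmsprop(w, lr=0.1, alpha=0.9, eps=1e-8, steps=100):
    s = 0.0
    for _ in range(steps):
        g = grad(w)
        s = alpha * s + (1 - alpha) * g * g   # running average of squared gradients
        w -= lr * g / (math.sqrt(s) + eps)    # step scaled per parameter
    return w

def run_adam(w, lr=0.1, b1=0.9, b2=0.99, eps=1e-8, steps=100):
    m = v = 0.0
    for t in range(1, steps + 1):
        g = grad(w)
        m = b1 * m + (1 - b1) * g             # momentum-like first moment
        v = b2 * v + (1 - b2) * g * g         # adaptive second moment
        m_hat = m / (1 - b1 ** t)             # bias correction for early steps
        v_hat = v / (1 - b2 ** t)
        w -= lr * m_hat / (math.sqrt(v_hat) + eps)
    return w

results = {f.__name__: f(5.0) for f in (run_sgd, run_momentum, run_rmsprop, run_adam)}
print(results)
```

On such a simple convex problem the relative speed of the four methods says little about real networks; the point is only to show which running quantities each method keeps.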

import torch

import torch.utils.data as Data

import torch.nn.functional as F

from torch.autograd import Variable

import matplotlib.pyplot as plt

# hyper parameters

LR = 0.01

BATCH_SIZE = 32

EPOCH = 12

x = torch.unsqueeze(torch.linspace(-1, 1, 1000), dim=1)

y = x.pow(2) + 0.1*torch.normal(torch.zeros(*x.size()))

# # plot dataset

# plt.scatter(x.numpy(), y.numpy())

# plt.show()

torch_dataset = Data.TensorDataset(x, y)

loader = Data.DataLoader(dataset=torch_dataset, batch_size=BATCH_SIZE, shuffle=True)

# default network

class Net(torch.nn.Module):
    def __init__(self):
        super(Net, self).__init__()
        self.hidden = torch.nn.Linear(1, 20)  # hidden layer
        self.predict = torch.nn.Linear(20, 1)  # output layer

    def forward(self, x):
        x = F.relu(self.hidden(x))  # activation function for hidden layer
        x = self.predict(x)  # linear output
        return x

net_SGD = Net()

net_Momentum = Net()

net_RMSprop = Net()

net_Adam = Net()

nets = [net_SGD, net_Momentum, net_RMSprop, net_Adam]

opt_SGD = torch.optim.SGD(net_SGD.parameters(), lr=LR)

opt_Momentum = torch.optim.SGD(net_Momentum.parameters(), lr=LR, momentum=0.8)

opt_RMSprop = torch.optim.RMSprop(net_RMSprop.parameters(), lr=LR, alpha=0.9)

opt_Adam = torch.optim.Adam(net_Adam.parameters(), lr=LR, betas=(0.9, 0.99))

optimizers = [opt_SGD, opt_Momentum, opt_RMSprop, opt_Adam]

loss_func = torch.nn.MSELoss()

losses_his = [[], [], [], []]

for epoch in range(EPOCH):
    for step, (batch_x, batch_y) in enumerate(loader):
        b_x = Variable(batch_x)
        b_y = Variable(batch_y)

        # train each network with its own optimizer on the same mini-batch
        for net, opt, l_his in zip(nets, optimizers, losses_his):
            output = net(b_x)
            loss = loss_func(output, b_y)
            opt.zero_grad()
            loss.backward()
            opt.step()
            l_his.append(loss.data.numpy())  # record the loss for plotting

labels = ['SGD', 'Momentum', 'RMSprop', 'Adam']

for i, l_his in enumerate(losses_his):
    plt.plot(l_his, label=labels[i])

plt.legend(loc='best')

plt.xlabel('Steps')

plt.ylabel('Loss')

plt.ylim((0, 0.2))

plt.show()

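Advanced neural network architectures

Convolutional neural networks (CNN)

- Typical structure

image->convolution->max pooling->convolution->max pooling->fully connected->fully connected->classifier

A typical layer of a convolutional network consists of three stages (convolution + activation + pooling). In the first stage, the layer performs several convolutions in parallel to produce a set of linear activations. In the second stage, each linear activation is passed through a nonlinear activation function, such as the rectified linear activation function; this stage is also called the detector stage. In the third stage, a pooling function is used to modify the output of the layer further.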

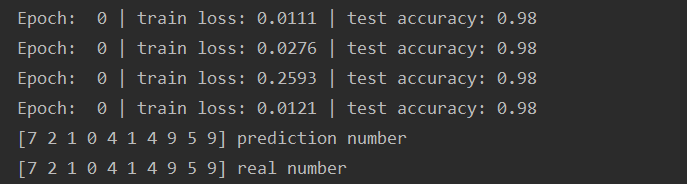
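The three stages described above can be traced on a single fake image. The sketch below uses the same settings as the first block of the CNN later in this section (16 filters of size 5, stride 1, padding 2, then 2x2 max pooling); the random input and the variable names are assumptions for illustration.

```python
import torch
import torch.nn as nn

x = torch.rand(1, 1, 28, 28)  # one fake grayscale 28x28 image: (batch, channel, height, width)

conv = nn.Conv2d(in_channels=1, out_channels=16, kernel_size=5, stride=1, padding=2)
pool = nn.MaxPool2d(kernel_size=2)

linear_response = conv(x)                # stage 1: parallel convolutions, linear activations
detected = torch.relu(linear_response)   # stage 2: nonlinear activation (detector stage)
pooled = pool(detected)                  # stage 3: pooling halves height and width

print(linear_response.shape, detected.shape, pooled.shape)
```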

CNN

- torch.nn

- output size = floor((input size - filter size + 2*padding) / stride) + 1

- nn.Conv2d

- nn.MaxPool2d
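The output-size formula above can be applied layer by layer to check the shape comments in the CNN code that follows. The sketch below is plain Python and assumes no dilation; `conv_output_size` is a hypothetical helper name, and the pooling layers are assumed to use a stride equal to their kernel size, which is the default for `nn.MaxPool2d`.

```python
def conv_output_size(input_size, kernel_size, stride=1, padding=0):
    """Spatial output size of a convolution or pooling layer (no dilation)."""
    return (input_size - kernel_size + 2 * padding) // stride + 1

size = 28                                                            # MNIST images are 28x28
after_conv1 = conv_output_size(size, kernel_size=5, stride=1, padding=2)
after_pool1 = conv_output_size(after_conv1, kernel_size=2, stride=2)
after_conv2 = conv_output_size(after_pool1, kernel_size=5, stride=1, padding=2)
after_pool2 = conv_output_size(after_conv2, kernel_size=2, stride=2)

flattened = 32 * after_pool2 * after_pool2                           # input size of the final Linear layer
print(after_conv1, after_pool1, after_conv2, after_pool2, flattened)
```

With stride 1, choosing padding = (kernel_size - 1) / 2 keeps the width and height unchanged, which is why only the pooling layers shrink the feature maps here.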

import torch

import torch.nn as nn

from torch.autograd import Variable

import torch.utils.data as Data

import torchvision

import matplotlib.pyplot as plt

# Hyper Parameters

EPOCH = 1

BATCH_SIZE = 50

LR = 0.001

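DOWNLOAD_MNIST = False  # set to True on the first run to download the dataset into ./mnist

train_data = torchvision.datasets.MNIST(
    root='./mnist',
    train=True,  # use the training split
    transform=torchvision.transforms.ToTensor(),  # convert images to float tensors in [0, 1]
    download=DOWNLOAD_MNIST
)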

# # plot one example

# print(train_data.train_data.size())

# print(train_data.train_labels.size())

# plt.imshow(train_data.train_data[0].numpy(), cmap='gray')

# plt.title('%i' % train_data.train_labels[0])

# plt.show()

train_loader = Data.DataLoader(dataset=train_data, batch_size=BATCH_SIZE, shuffle=True)

test_data = torchvision.datasets.MNIST(root='./mnist', train=False)

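# first 2000 test images, shape (2000, 1, 28, 28), scaled to [0, 1]
# (.data and .targets replace the deprecated .test_data and .test_labels attributes)
test_x = torch.unsqueeze(test_data.data, dim=1).type(torch.FloatTensor)[:2000]/255

test_y = test_data.targets[:2000]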

class CNN(nn.Module):
    def __init__(self):
        super(CNN, self).__init__()
        self.conv1 = nn.Sequential(
            nn.Conv2d(  # input shape (1, 28, 28)
                in_channels=1,  # number of channels in the input image
                out_channels=16,  # number of channels produced by the convolution
                kernel_size=5,  # size of the convolving kernel: scans a 5*5 patch at a time
                stride=1,  # the kernel moves 1 pixel per step (default: 1)
                padding=2,  # zero-padding on both sides (default: 0); with stride = 1, padding = (kernel_size-1)/2 keeps the size
            ),  # -> (16, 28, 28)
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2),  # keep the strongest feature in each 2*2 area -> (16, 14, 14)
        )
        self.conv2 = nn.Sequential(
            nn.Conv2d(16, 32, 5, 1, 2),  # -> (32, 14, 14)
            nn.ReLU(),
            nn.MaxPool2d(kernel_size=2)  # -> (32, 7, 7)
        )
        self.out = nn.Linear(32*7*7, 10)  # fully connected layer, 10 classes

    def forward(self, x):
        x = self.conv1(x)
        x = self.conv2(x)  # (batch, 32, 7, 7)
        x = x.view(x.size(0), -1)  # flatten to (batch, 32*7*7)
        output = self.out(x)
        return output

cnn = CNN()

# print(cnn)

optimizer = torch.optim.Adam(cnn.parameters(), lr=LR)

loss_func = nn.CrossEntropyLoss()

# training and testing

for epoch in range(EPOCH):
    for step, (x, y) in enumerate(train_loader):
        b_x = Variable(x)
        b_y = Variable(y)

        output = cnn(b_x)
        loss = loss_func(output, b_y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()

        if step % 50 == 0:
            # evaluate on the first 2000 test samples
            test_output = cnn(test_x)
            pred_y = torch.max(test_output, 1)[1].data.numpy()
            accuracy = float((pred_y == test_y.data.numpy()).astype(int).sum()) / float(test_y.size(0))
            print('Epoch: ', epoch, '| train loss: %.4f' % loss.data.numpy(), '| test accuracy: %.2f' % accuracy)

# print 10 predictions from test data

test_output = cnn(test_x[:10])

pred_y = torch.max(test_output, 1)[1].data.numpy().squeeze()

print(pred_y, 'prediction number')

print(test_y[:10].numpy(), 'real number')

Source: CSDN

Author: &Amaris

Link: https://blog.csdn.net/weixin_43488958/article/details/104286603